Installation

Download the installer for your platform and run it — no build tools, no terminal commands, no dependencies to install.

Step 1: Download

Go to the GitHub Releases page and download the latest version for your OS:

| Platform | File | Notes |
|---|---|---|
| Windows | .msi or .exe | Double-click to install. You may need to click "More info" → "Run anyway" on the SmartScreen prompt. |
| macOS | .dmg | Open the DMG and drag ContextuAI Solo into the Applications folder. On first launch, right-click the app → Open to bypass Gatekeeper. |
| Linux | .AppImage or .deb | For the AppImage: make it executable with chmod +x, then run it. For the .deb: sudo dpkg -i contextuai-solo.deb |

Step 2: Launch

Open ContextuAI Solo from your applications. On first launch, the Setup Wizard guides you through choosing an AI provider and configuring your profile. That's it — you're ready to go.

Building from Source (Advanced)

If you prefer to build from source (contributors, developers):

Setup Wizard

On first launch, a 3-step wizard walks you through configuration:

- Profile — Enter your name, business name, and select your industry (16 options: SaaS, E-commerce, Healthcare, Finance, Education, Marketing, Consulting, Real Estate, Legal, Manufacturing, Non-Profit, Freelancing, and more)

- AI Provider — Choose how you want to run AI:

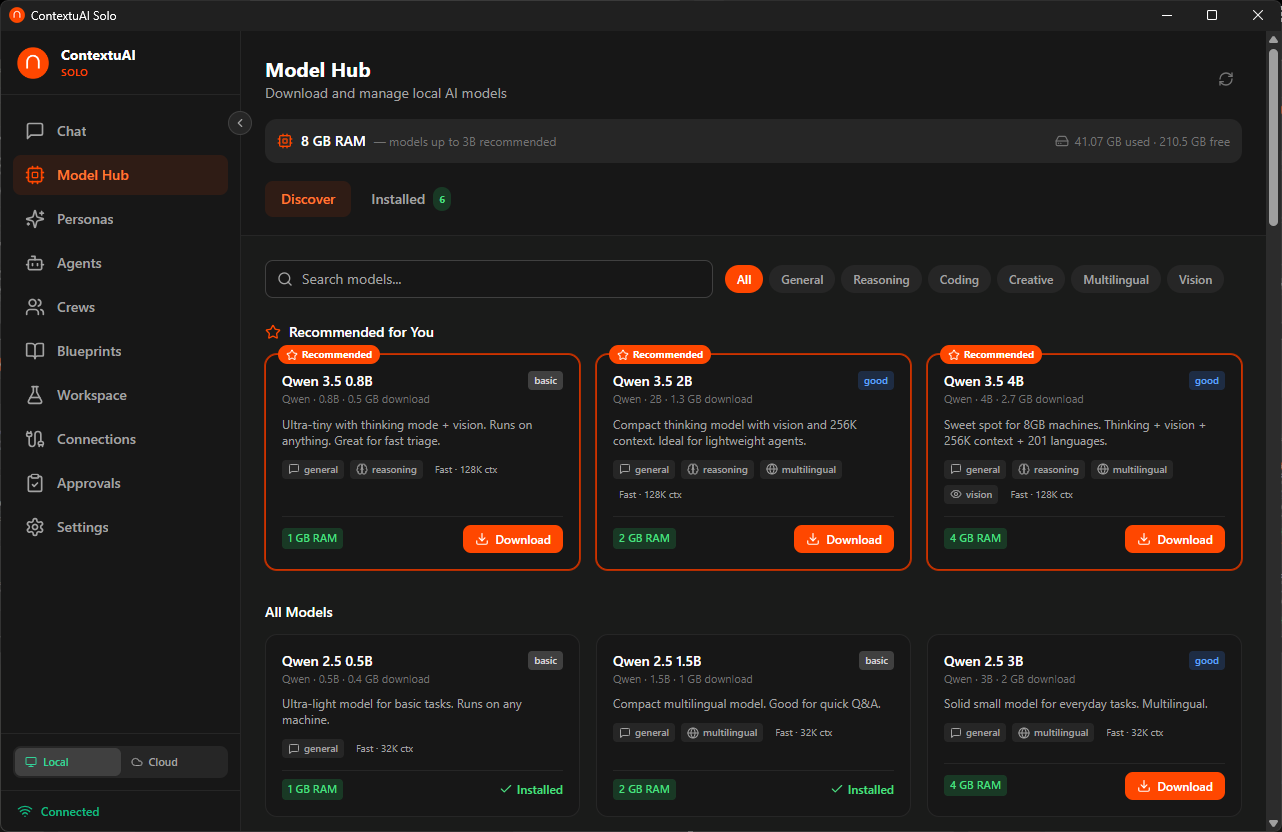

Built-in Local AI (Free, No Setup)

Solo ships with a built-in Model Hub: 39 GGUF models you can download and run directly inside the app. No API keys, no external tools, no Ollama, no Python: just pick a model, click download, and start chatting. Once a model is downloaded, everything runs on your CPU, fully offline.

This is the fastest way to get started. The wizard lets you pick a recommended model based on your RAM:

| Your RAM | Recommended Model | Download Size |
|---|---|---|
| 4-8 GB | Qwen 3.5 0.8B or Gemma 3 1B | ~700 MB |
| 8-16 GB | Qwen 3 8B or DeepSeek R1 7B | ~4-5 GB |
| 16-32 GB | Gemma 4 12B or Qwen 3 14B | ~7-9 GB |
| 32+ GB | Gemma 4 27B or DeepSeek R1 32B | ~16-20 GB |

New: Gemma 4, Google's latest model family (April 2025). Gemma 4 12B rivals models twice its size on reasoning and instruction-following, and Gemma 4 27B is one of the strongest open models available. Both run fully on CPU inside Solo and are our top recommendation for 16GB+ machines.

Cloud Providers (BYOK)

If you have API keys from cloud providers, you can add them for access to the latest frontier models:

- Anthropic Claude — Sonnet 4, Opus, Haiku (Get API key)

- OpenAI — GPT-4o, GPT-4o Mini, GPT-4 Turbo, o1-preview (Get API key)

- Google Gemini — 2.0 Flash, 2.0 Pro, 1.5 Flash (Get API key)

- AWS Bedrock — Claude and Titan models

- Ollama — If you already run Ollama locally, Solo can connect to it as an additional provider

You can use local + cloud together — switch between them anytime from the model dropdown in chat.

- Brand Voice — Define your target audience, content topics, and brand tone

Your First Chat

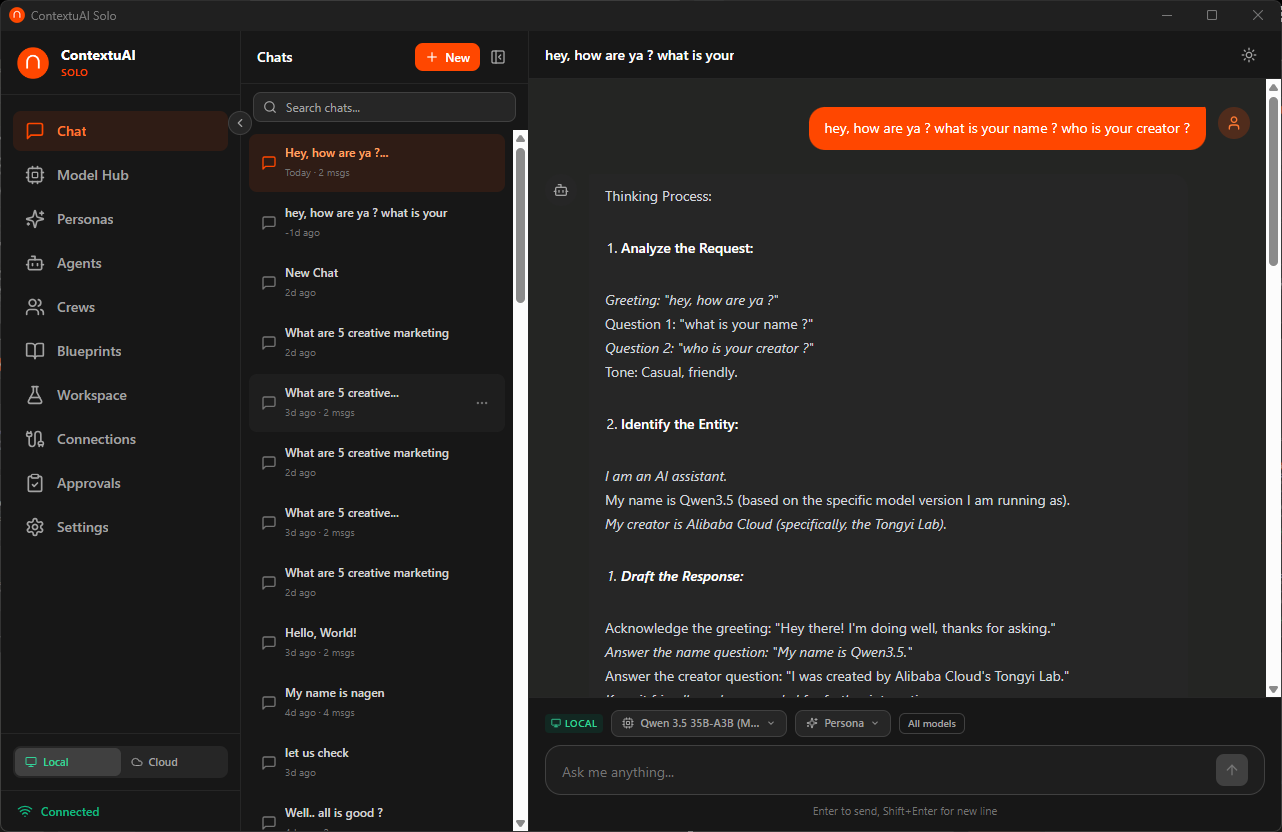

After the wizard, you land on the Chat page:

- Type a message in the input box at the bottom

- Select an AI model from the dropdown (shows provider badges: Local/Cloud)

- Optionally pick a persona to give the AI specialized context

- Press Enter to send; the AI streams its response in real time

Conversations are saved as sessions in the left sidebar, grouped by date with message counts.

The main chat interface with model selector, persona picker, and session sidebar

AI Chat

The chat interface is the heart of Solo:

- Multi-model support — Switch between providers and models mid-conversation using the dropdown

- Real-time streaming — Responses appear token-by-token; click the stop button to abort

- Session management — Create, rename, archive, delete, and search sessions from the sidebar

- Persona selection — Attach a persona to give the AI access to your database, API, or custom instructions

- Markdown rendering — Code blocks with syntax highlighting, tables, lists, and rich formatting

- Thinking mode — Models that support reasoning (Qwen 3, DeepSeek R1) show a collapsible thinking section

- Dark/Light mode — Toggle from the top bar; applies across all pages

Tip: press Ctrl+N (Cmd+N on macOS) to start a new chat instantly, and use Shift+Enter for a new line without sending.

Model Hub

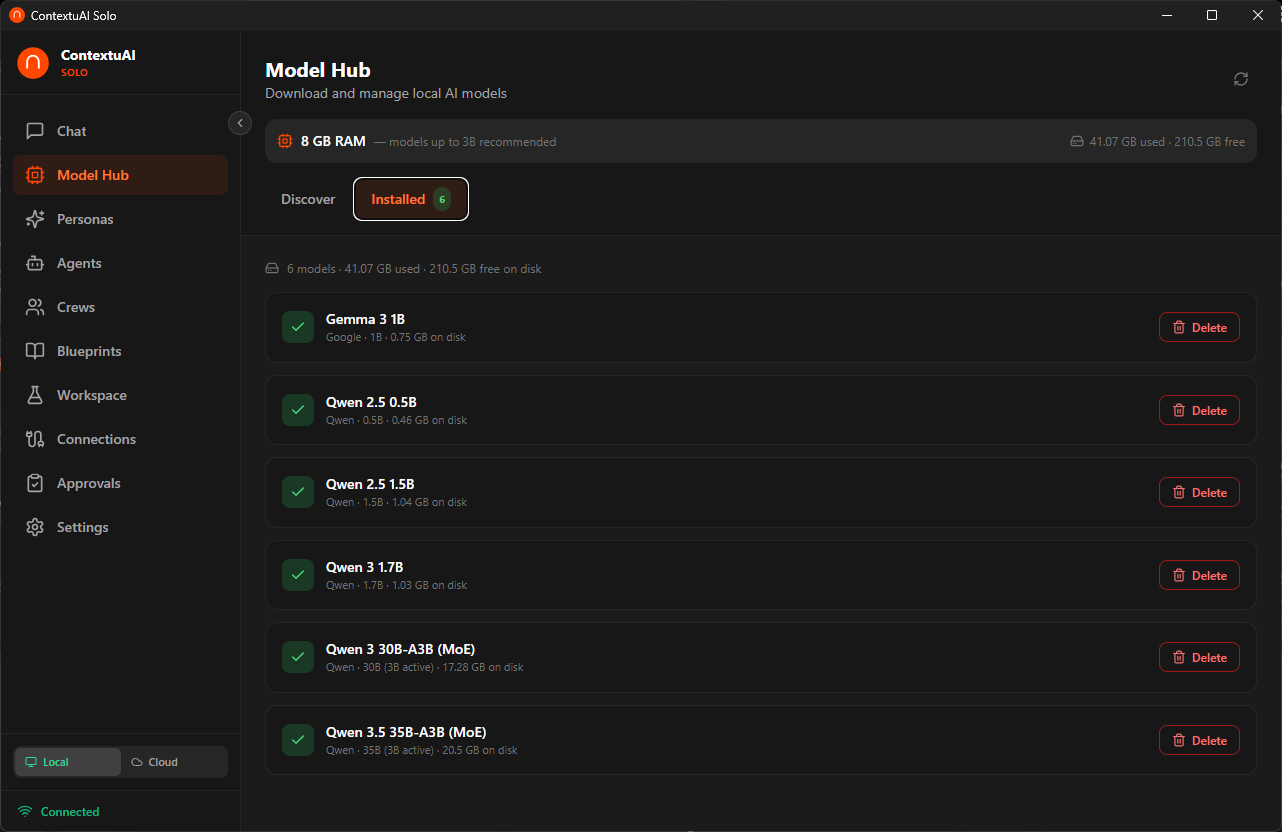

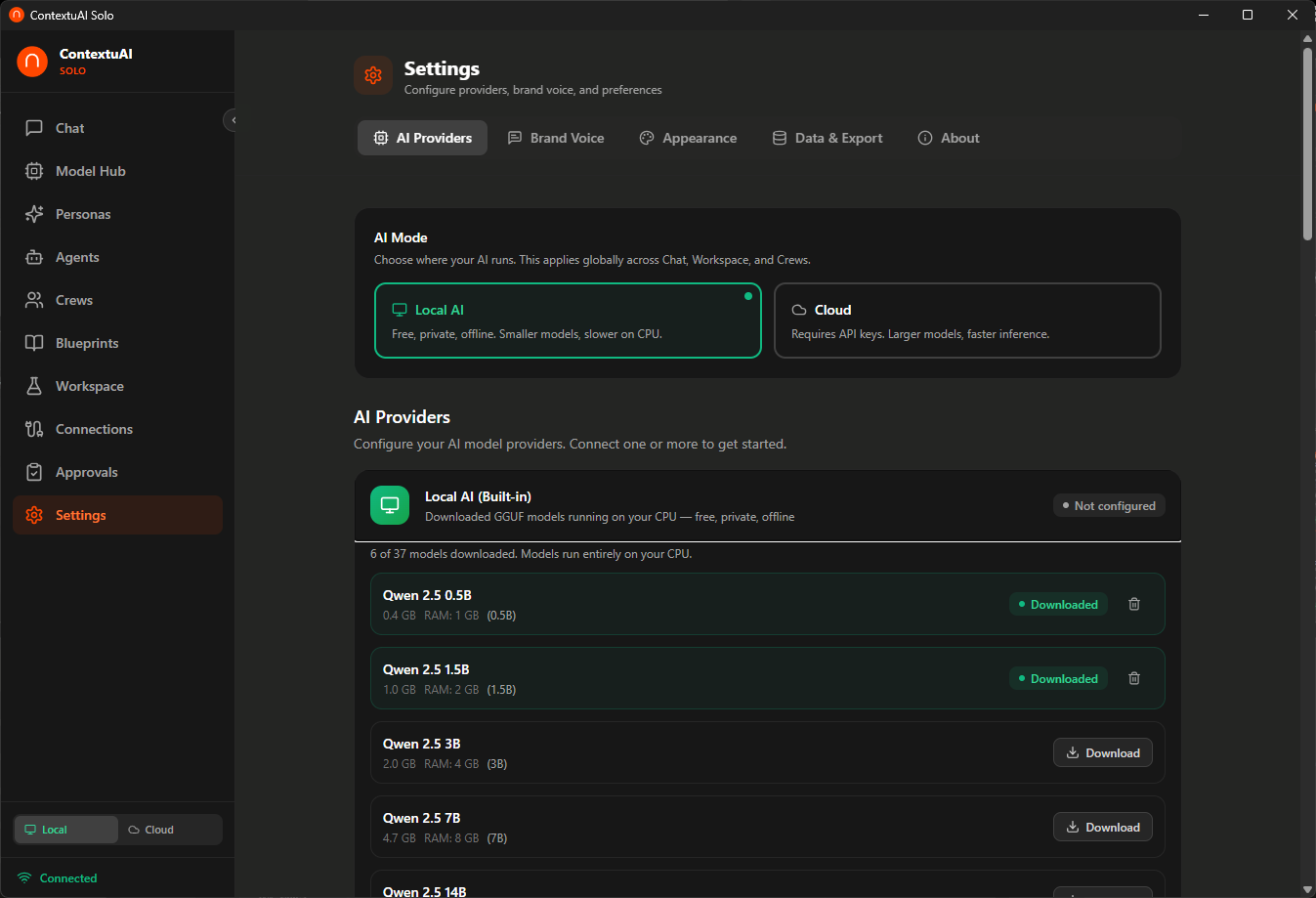

Configure cloud AI providers and download local models from Settings → AI Providers.

Browse and configure AI models — cloud providers and local GGUF models

Cloud Providers

Enter your API key for any supported provider. Click Test Connection to verify it works. Toggle the active provider to set your default model.

Local Models

Download GGUF models with one click. Solo auto-detects your RAM and recommends compatible models. Progress is shown in real-time during download.

Manage installed local models — sync status, storage usage, ready to chat

AI Mode Toggle

Switch between Local and Cloud mode. In local mode, all inference runs on your CPU — no internet required after model download.

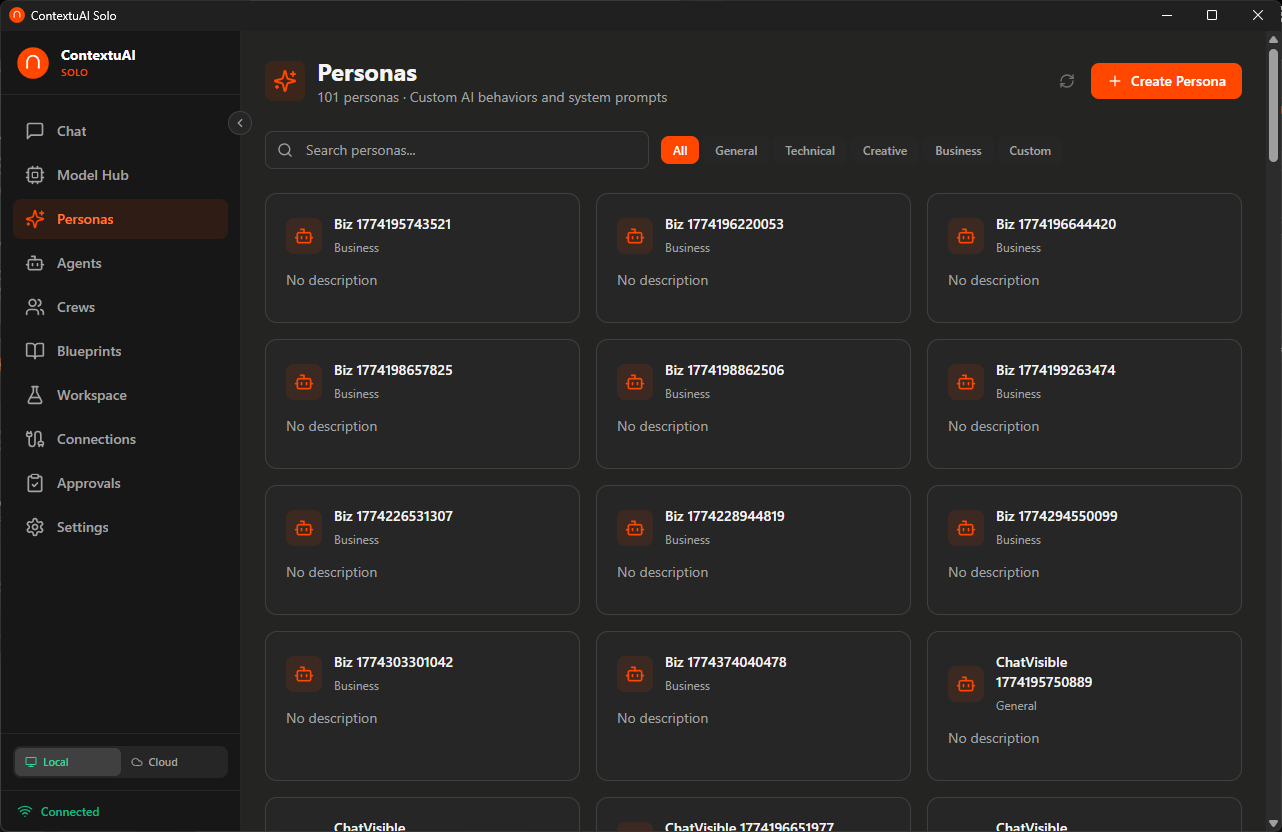

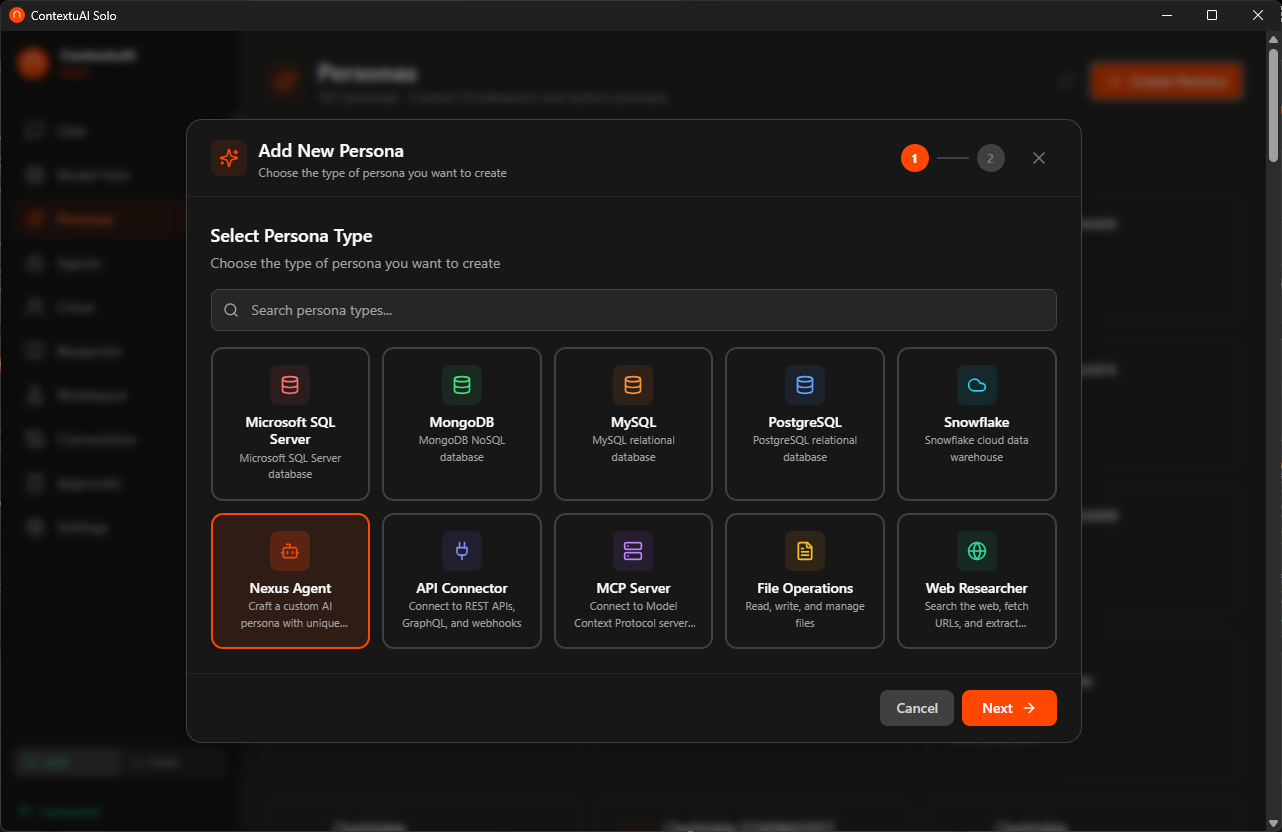

Personas

Personas are AI identities that connect to your real systems. Solo includes 12 persona types:

| Persona Type | What It Does |
|---|---|
| Nexus Agent | General-purpose AI with custom system prompts |
| Web Researcher | Search the web and scrape pages |
| PostgreSQL | Query PostgreSQL in natural language; the AI writes the SQL |
| MySQL | Query MySQL databases with auto-generated SQL |
| MSSQL | Connect to Microsoft SQL Server |
| Snowflake | Query your Snowflake data warehouse |
| MongoDB | Query MongoDB document databases |
| GitHub | Browse repos, issues, and pull requests |
| GitLab | Access repos and CI/CD pipelines |
| API Connector | Call any REST API endpoint |
| File Operations | Read, write, and parse local files |
| Slack | Send and read messages in Slack channels |

Browse and manage your AI personas

Creating a Persona

Solo uses a 2-step wizard:

- Choose Type — Select from the persona type dropdown (each type shows an icon and description)

- Configure Details — Enter name, category, description, connection credentials, and system prompt

For database types, use the Test Connection button to verify credentials before saving.

Wizard-based persona creation with type selection and credential configuration

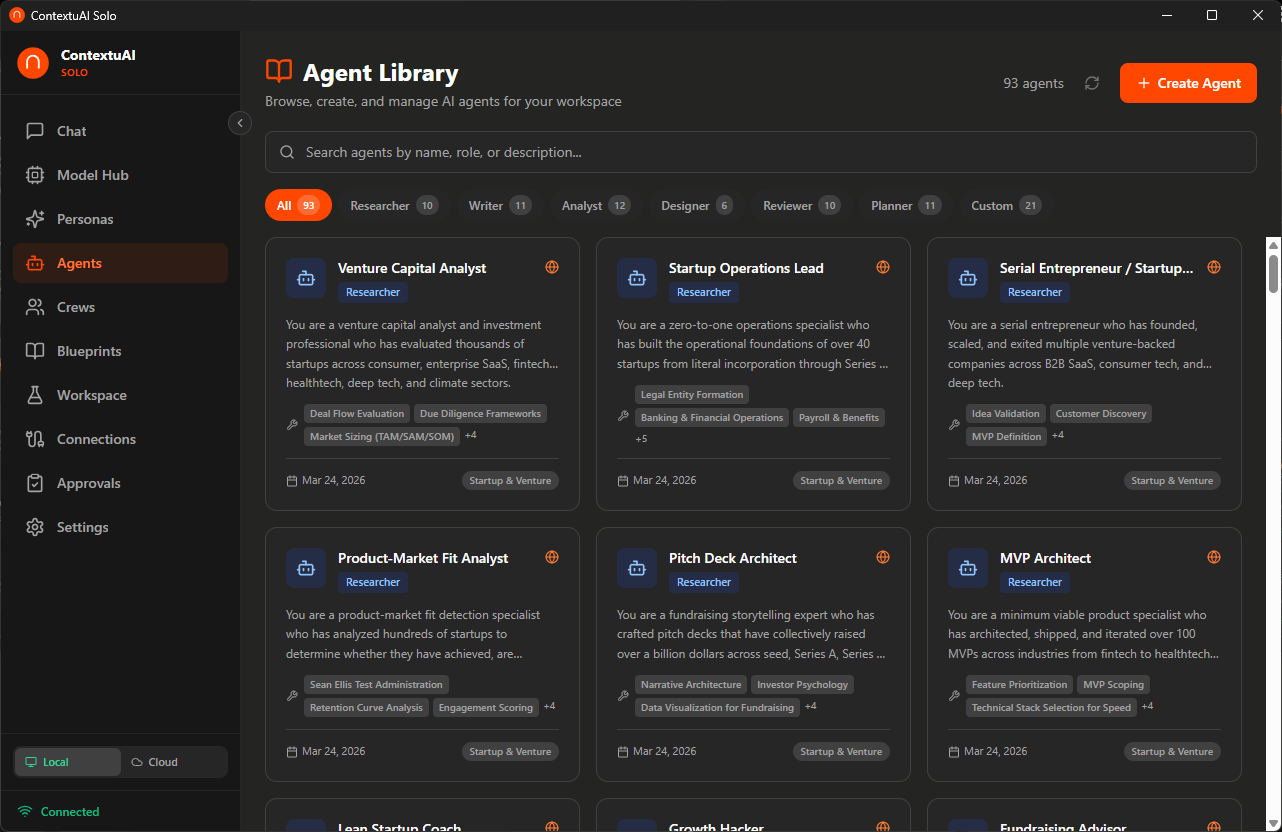

Agent Library

Solo ships with 93 pre-built business agents across 12 departments. Each has a specialized system prompt, recommended model, and tool configurations.

Browse 93 agents across 12 departments — search, filter by role, view details

| Department | Example Agents |
|---|---|
| C-Suite | CEO Strategic Advisor, CFO Financial Strategist, COO Operations Optimizer, CTO Technology Advisor |
| Marketing & Sales | Content Strategist, SEO Specialist, Social Media Manager, Brand Voice Designer, Email Campaign Builder |
| Finance & Operations | Financial Analyst, Budget Planner, Invoice Processor, Tax Advisor, Revenue Forecaster |
| Legal & Compliance | Contract Reviewer, Compliance Checker, IP Advisor, Privacy Policy Drafter |
| HR & People | Recruiter Assistant, Job Description Writer, Performance Review Helper |
| Design & UX | UI/UX Advisor, Brand Identity Designer, Presentation Builder, Color Palette Generator |
| Data & Analytics | Data Analyst, SQL Query Builder, Dashboard Designer, Statistical Modeler |
| IT & Security | DevOps Assistant, Security Auditor, Infrastructure Planner, Incident Response Helper |
| Product Management | Product Manager, Feature Prioritizer, User Story Writer, Roadmap Planner |
| Startup & Venture | Pitch Deck Builder, Business Model Canvas Creator, Go-to-Market Strategist |
| Operations | Process Optimizer, Supply Chain Analyst, Quality Assurance Planner |
| Specialized | Industry-specific agents and custom roles |

Using Agents

Browse by department or filter by role: Researcher, Writer, Analyst, Designer, Developer, Reviewer, Planner, or Custom. Click any agent card to view its full system prompt, tools, and model override.

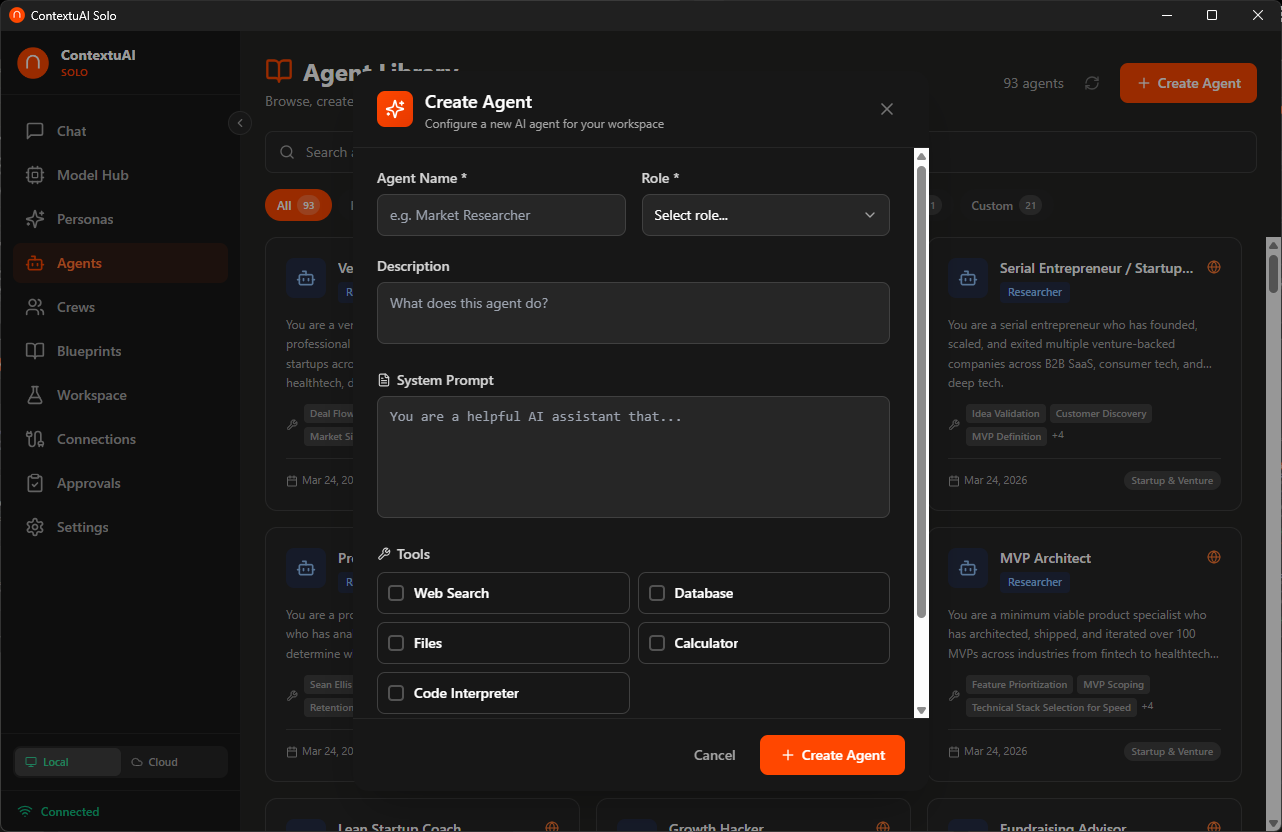

Creating a Custom Agent

Click + Create Agent and configure: name, role, description, system prompt, tool access, model preference, category, and public/private visibility.

Create a custom agent with role, system prompt, and tool configuration

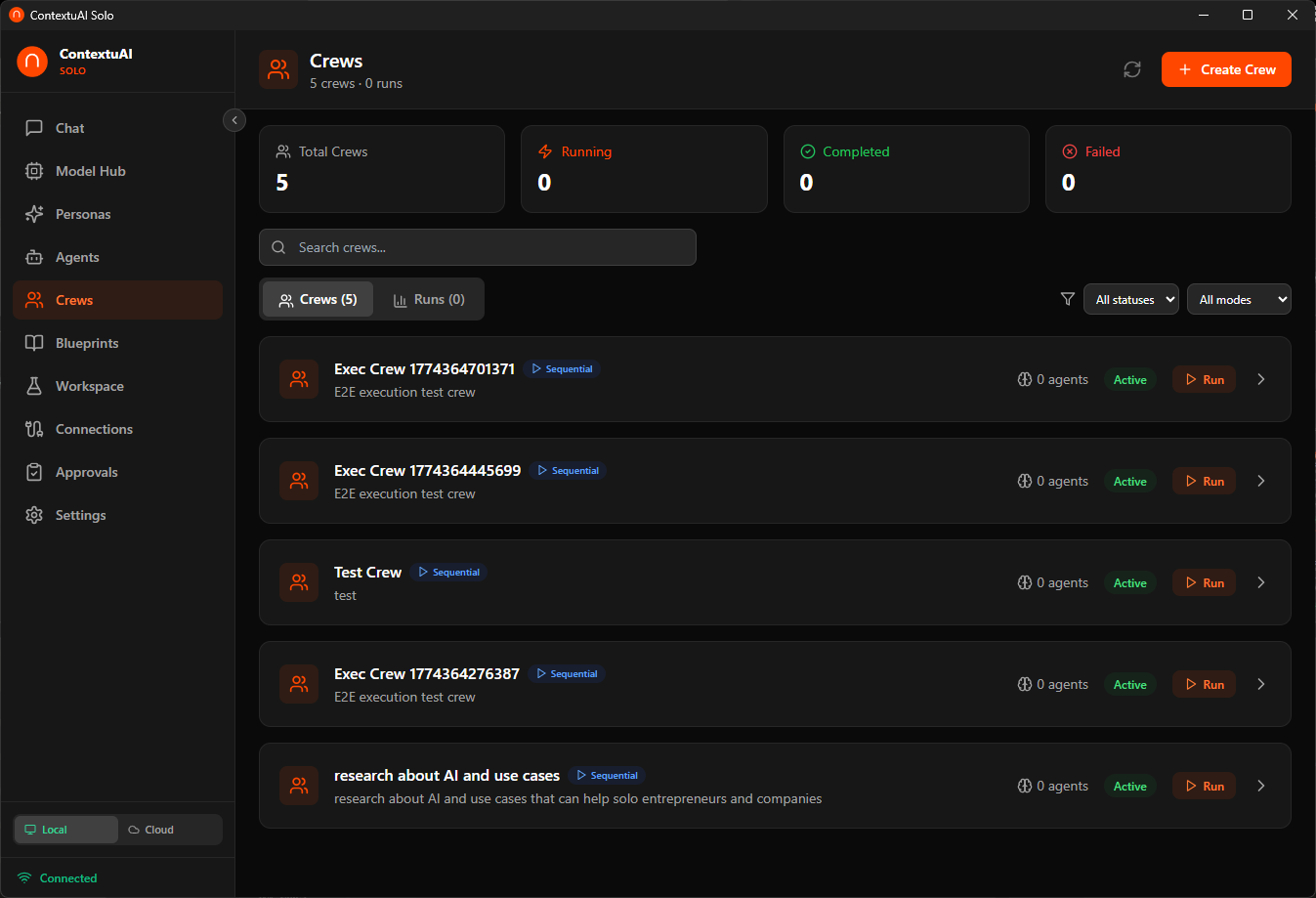

Crews

Crews are multi-agent teams that collaborate on complex tasks. The crew system uses a 5-step creation wizard.

Crew dashboard with stats (Total, Running, Completed, Failed) and crew list

5-Step Crew Wizard

- Crew Details — Name, description, optional blueprint template, and AI model selection

- Execution Mode — Choose how agents collaborate:

| Mode | How It Works | Best For |
|---|---|---|
| Sequential | Agents run one after another; output feeds into the next | Research → Write → Edit |
| Parallel | All agents run simultaneously on the same input | SEO + Social + Email |
| Pipeline | Staged processing with checkpoints | Draft → Review → Publish |
| Autonomous | A coordinator agent decides the flow dynamically | Open-ended tasks |

- Agent Team: Add agents from the library or create custom ones inline. Reorder them for sequential execution.

- Connections — Bind social channels (Telegram, Discord, LinkedIn) with an approval toggle

- Review — Confirm everything and click Create Crew
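The difference between the Sequential and Parallel execution modes can be illustrated with a small asyncio sketch. This is not Solo's crew engine: the Agent type and the make_agent stand-in are hypothetical, and a real agent would call a model instead of wrapping its input in its name.

```python
import asyncio
from typing import Awaitable, Callable, List

# Hypothetical agent shape: an async function from input text to output text.
Agent = Callable[[str], Awaitable[str]]


async def run_sequential(agents: List[Agent], task: str) -> str:
    """Sequential mode: each agent's output becomes the next agent's input."""
    text = task
    for agent in agents:
        text = await agent(text)
    return text


async def run_parallel(agents: List[Agent], task: str) -> List[str]:
    """Parallel mode: every agent receives the same input and runs concurrently."""
    results = await asyncio.gather(*(agent(task) for agent in agents))
    return list(results)


def make_agent(name: str) -> Agent:
    """Stand-in agent that wraps its input in its own name."""
    async def agent(text: str) -> str:
        await asyncio.sleep(0)  # placeholder for a model call
        return f"{name}({text})"
    return agent


if __name__ == "__main__":
    team = [make_agent("research"), make_agent("write"), make_agent("edit")]
    print(asyncio.run(run_sequential(team, "topic")))  # edit(write(research(topic)))
    print(asyncio.run(run_parallel(team, "topic")))    # ['research(topic)', 'write(topic)', 'edit(topic)']
```

In this sketch, Sequential lets each step build on the previous result, while Parallel finishes in roughly the time of the slowest agent but no agent sees another's output.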

Running a Crew

Click Run on any crew. Enter your input text and watch real-time progress: status bar, duration, token count, cost, step timeline, and per-agent metrics. You can cancel a run mid-execution.

The Runs tab shows execution history with status, duration, and cost for every past run.

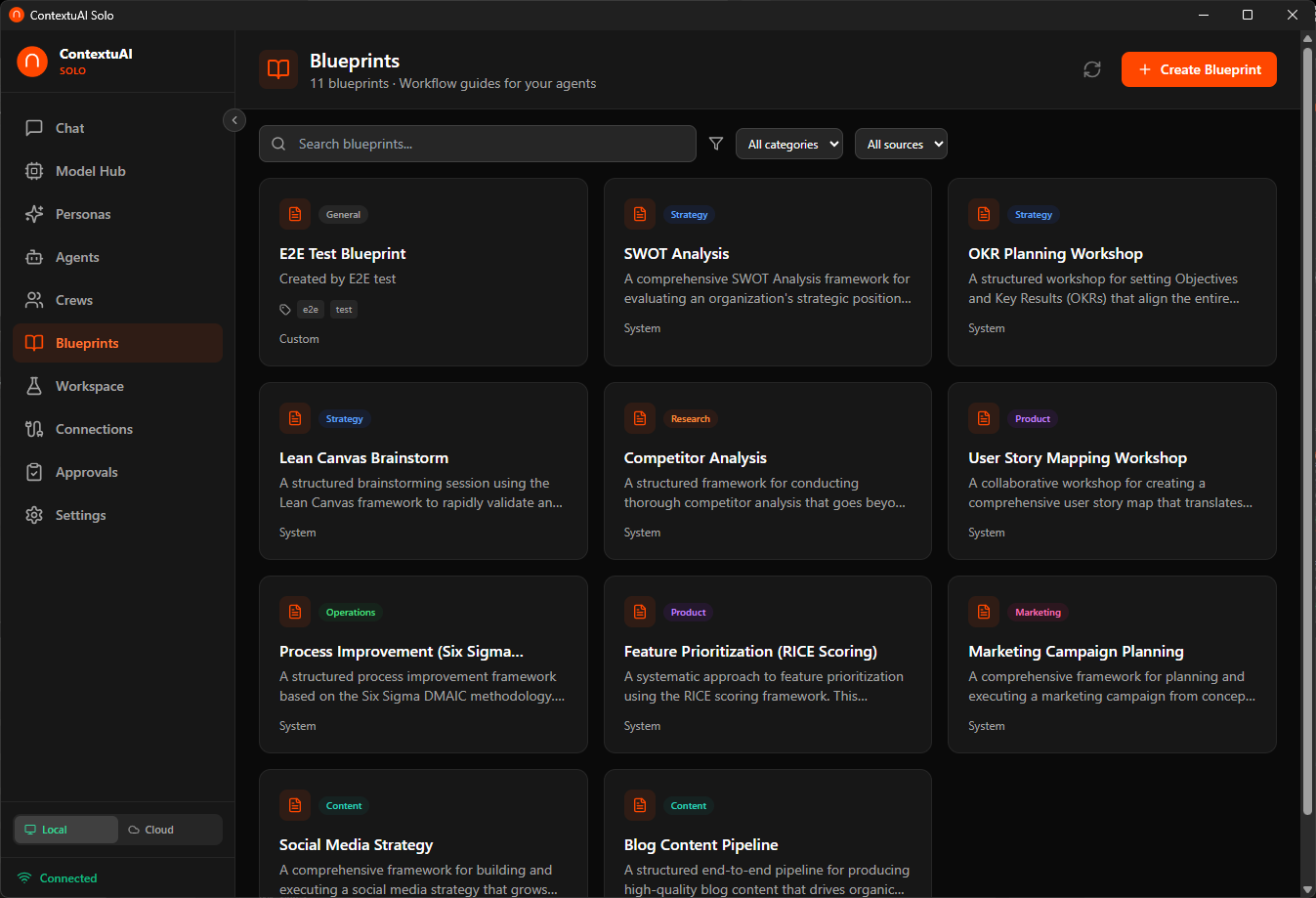

Blueprints

Solo ships with 15 pre-built templates to jumpstart your workflows.

Browse blueprint templates — search, filter by category, preview content

10 Workshop Blueprints

Multi-agent brainstorming sessions across 5 categories (Strategy, Content, Marketing, Product, Research):

- C-Suite Strategy Session

- Startup Pitch Review

- Marketing Campaign Planning

- Product Roadmap Workshop

- Financial Analysis Session

- Talent & Culture Review

- Legal & Compliance Audit

- Data Strategy Workshop

- Technology Partner Evaluation

- Custom Workshop

5 Team Templates

Pre-configured agent teams for engineering workflows:

- Migration Squad — Code migration across stacks

- MVP Builder — Rapid prototyping

- Code Reviewer — Quality and standards

- Full-Stack App — End-to-end application

- API Designer — REST schemas and design

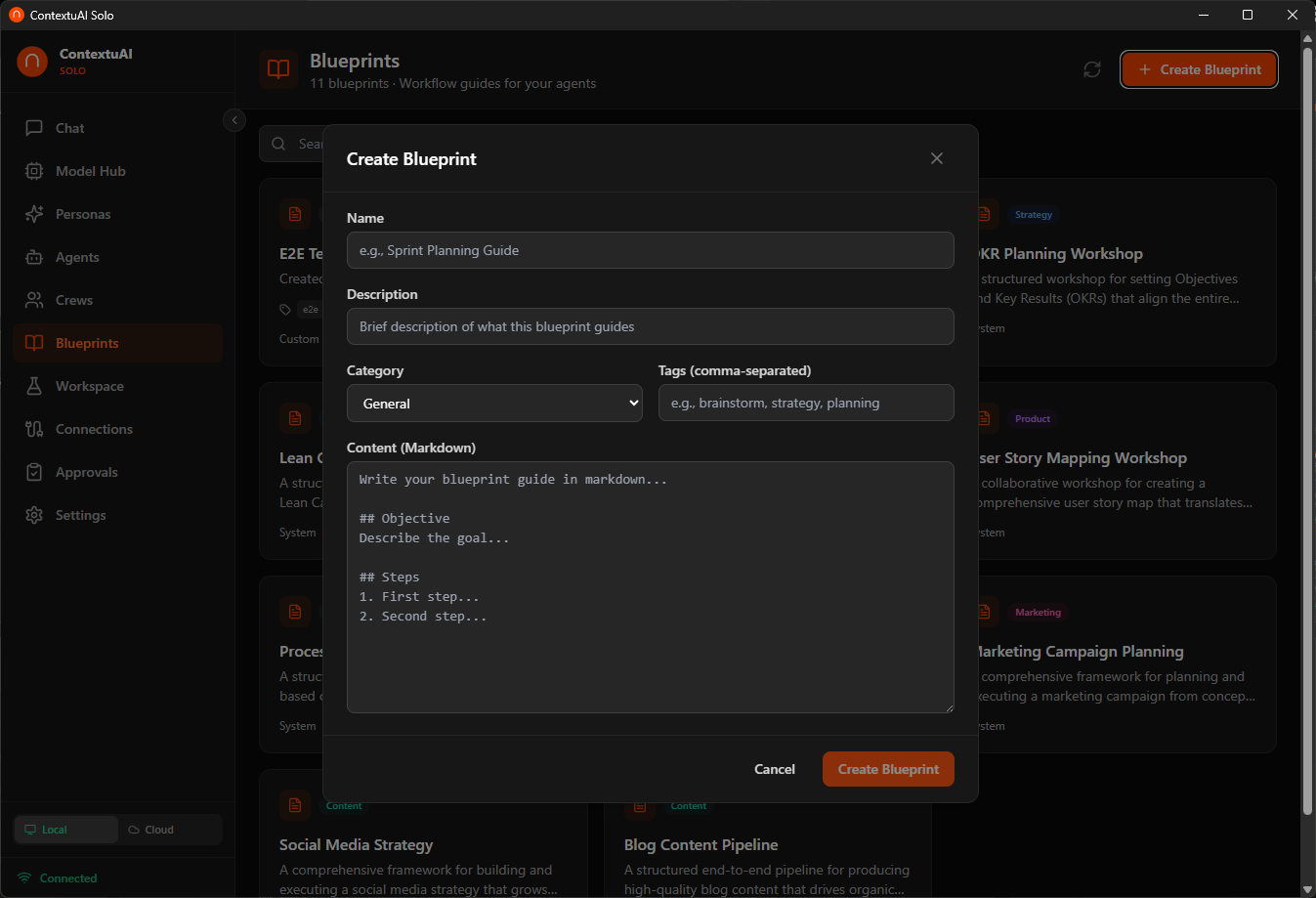

Creating Custom Blueprints

Click + Create Blueprint and define: name, description, category, tags, and markdown content. Your custom blueprints appear alongside the built-in ones.

Create a custom blueprint with markdown content and tags

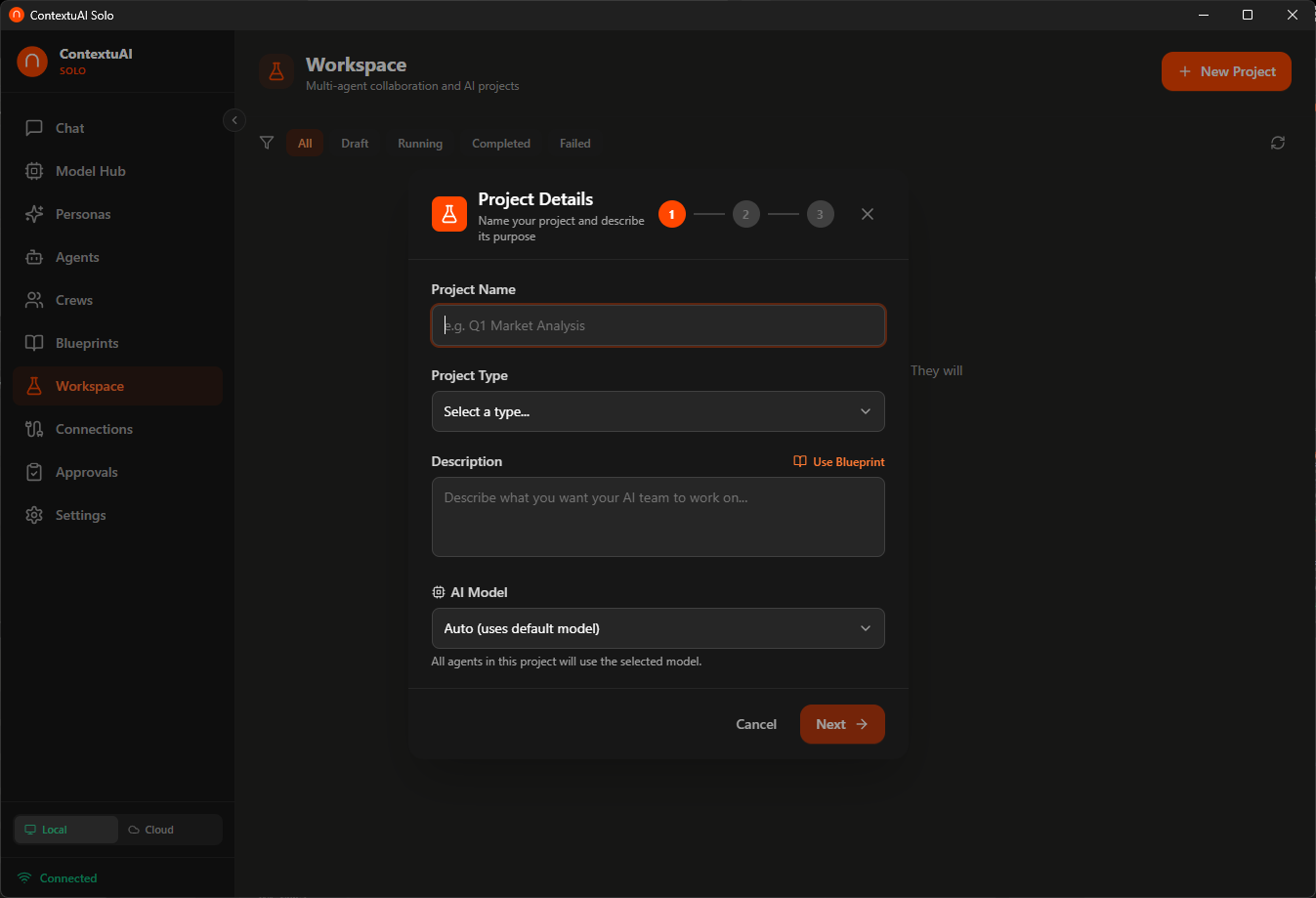

Workspace

The Workspace is for project-based multi-agent collaboration with structured outputs and exportable artifacts.

Project list with status badges — create, run, and review multi-agent projects

3-Step Project Wizard

- Project Details — Name, description, AI model, and optional blueprint template

- Agent Selection — Browse the 93-agent library, search, and check agents to add (count badge updates live)

- Review & Create — Confirm selections and launch

Viewing Results

Completed projects have two tabs:

- Discussion Tab — Step-by-step agent contributions in a threaded conversation view

- Compiled Output Tab — Final consolidated result with a copy button for easy export

Project statuses: Draft (not yet started), Running (agents working), Completed (results ready), Failed (error occurred).

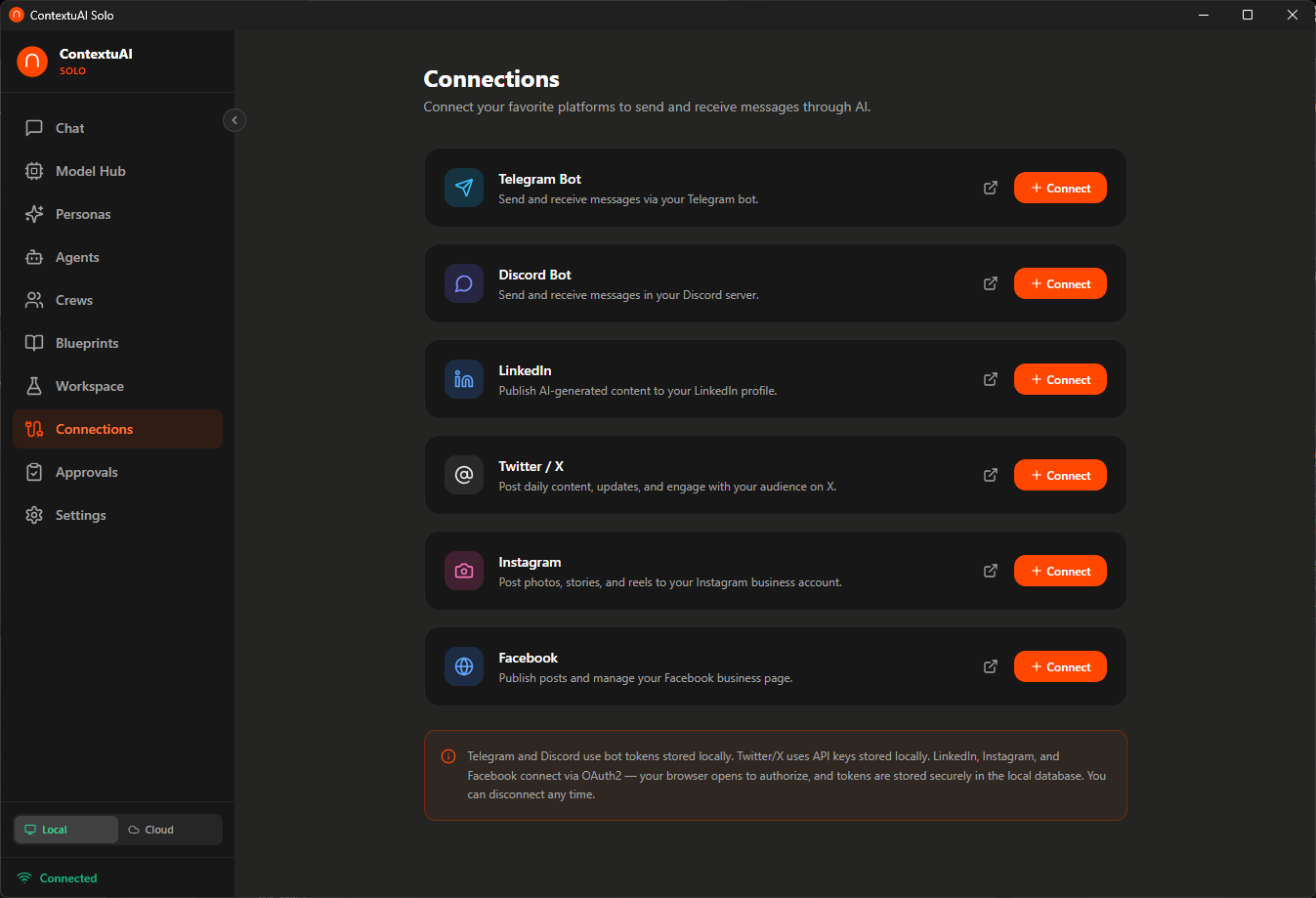

Connections

Connect Solo to external messaging platforms for automated content publishing and AI-powered responses.

Platform connection cards with status badges and direction toggles

| Platform | Auth Method | Direction | Status |
|---|---|---|---|
| Telegram Bot | Bot token | Inbound + Outbound | Live |
| Discord Bot | Bot token + Public Key + App ID | Inbound + Outbound | Live |
| LinkedIn | OAuth 2.0 (Client ID/Secret) | Outbound only | Live |
| Twitter/X | API Key + Secret + Access Tokens | Outbound | Coming Soon |
|  | OAuth (Business/Creator account) | Outbound | Coming Soon |
|  | OAuth (Page required) | Outbound | Coming Soon |

Setting Up Telegram

- Open Telegram, search for @BotFather

- Send /newbot and follow the prompts to get your bot token

- In Solo, go to Connections → Telegram → paste the token

- Toggle Inbound/Outbound as needed and click Connect

- For inbound messages, set up a webhook with ngrok:

ngrok http 18741
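Telegram delivers inbound messages to a webhook registered through the Bot API's setWebhook method. Solo may register it for you when you click Connect; if you need to do it by hand, this sketch builds and sends the setWebhook request from your bot token and ngrok HTTPS address. The route on Solo's backend that receives Telegram updates is not documented here, so the path argument (for example /webhooks/telegram) is a hypothetical placeholder.

```python
import json
import urllib.request
from urllib.parse import urlencode

TELEGRAM_API = "https://api.telegram.org"


def set_webhook_url(bot_token: str, public_base_url: str, path: str) -> str:
    """Build a Bot API setWebhook URL pointing Telegram at public_base_url + path."""
    webhook = public_base_url.rstrip("/") + "/" + path.lstrip("/")
    return f"{TELEGRAM_API}/bot{bot_token}/setWebhook?" + urlencode({"url": webhook})


def register_webhook(bot_token: str, public_base_url: str, path: str) -> bool:
    """Call setWebhook and return Telegram's "ok" flag (requires network access)."""
    url = set_webhook_url(bot_token, public_base_url, path)
    with urllib.request.urlopen(url, timeout=10) as resp:
        return bool(json.load(resp).get("ok"))
```

A new ngrok session may give you a different public URL; if so, register the webhook again with the new address.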

Setting Up Discord

- Go to the Discord Developer Portal

- Create a New Application → go to Bot → copy the token

- Enable Privileged Gateway Intents (Message Content, Server Members)

- In Solo, paste the Bot Token, Public Key, and Application ID

Setting Up LinkedIn

- Create an app at LinkedIn Developers

- Request "Share on LinkedIn" access for your app

- In Solo, enter Client ID and Client Secret

- Click Connect — an OAuth browser window will open for authorization

Connected platforms can be bound to crews in Step 4 of the crew wizard, enabling automated content distribution with human-in-the-loop approval.

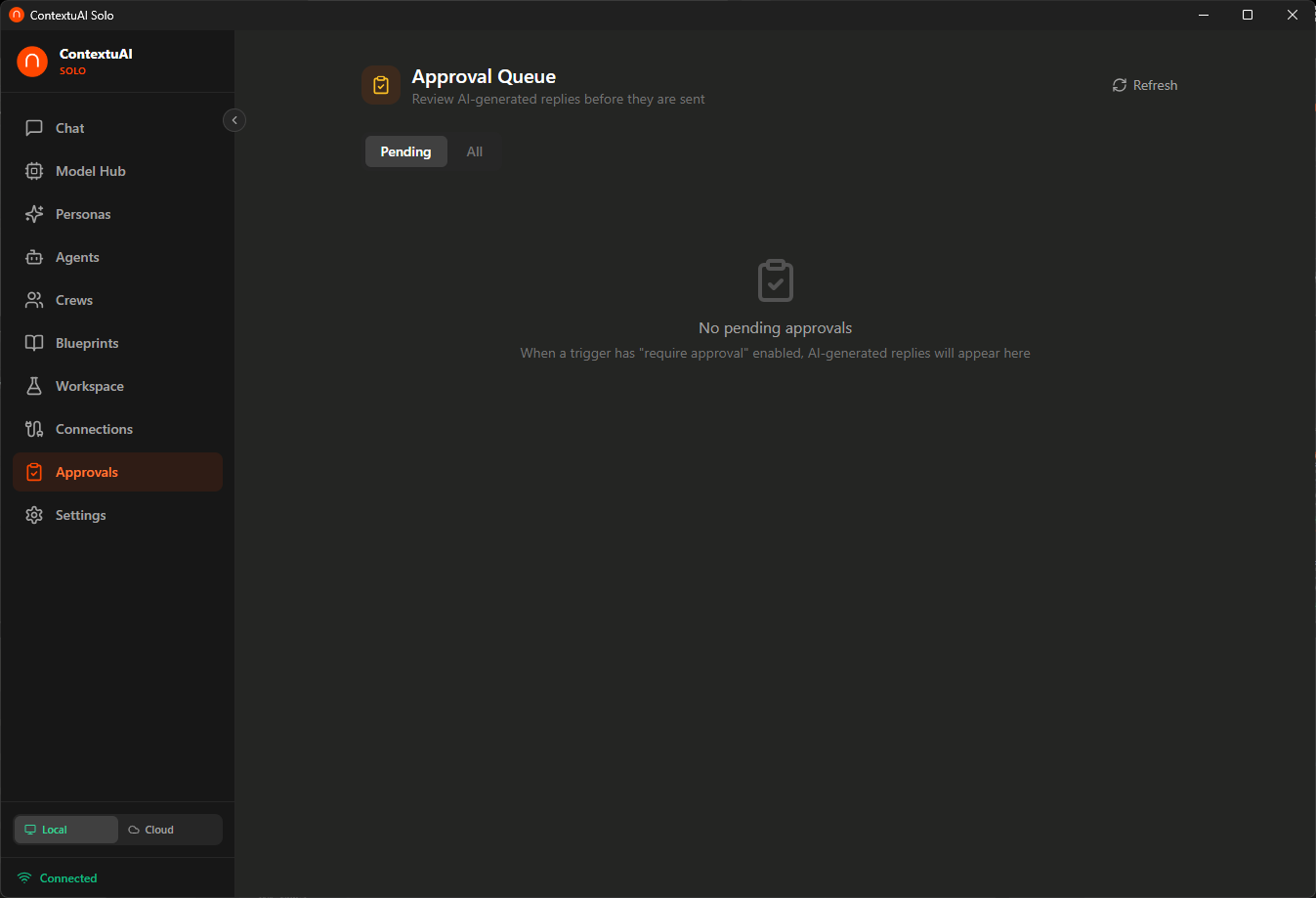

Approval Queue

When crews or agents auto-reply to incoming messages, every response lands in your approval queue first. Nothing gets sent without your explicit approval.

Review AI-drafted replies before they are sent — approve, edit, or reject

- Set up triggers — Link a channel (Telegram, Discord) to a crew or agent with "require approval" enabled

- AI drafts a reply — Incoming messages are processed by your AI; the draft appears in the queue

- Review & send — Edit the response if needed, then approve to send or reject to discard

Triggers support cooldown periods to prevent spam, and you can link a crew instead of a single agent for more sophisticated multi-step responses.
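The trigger-and-approval flow described above can be modeled roughly as follows. All class and function names are hypothetical, not Solo's API. In this sketch a trigger simply ignores messages that arrive inside its cooldown window, and every draft waits in the queue until a human approves it (optionally after editing) or rejects it.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Draft:
    channel: str
    incoming: str
    reply: str
    status: str = "pending"  # becomes "approved" or "rejected"


@dataclass
class Trigger:
    """Links a channel to an agent or crew (`respond`) with a cooldown in seconds."""
    channel: str
    respond: Callable[[str], str]
    cooldown: float = 60.0
    last_fired: Optional[float] = field(default=None, init=False)

    def handle(self, message: str, queue: List[Draft],
               now: Optional[float] = None) -> Optional[Draft]:
        now = time.monotonic() if now is None else now
        # Ignore messages that arrive inside the cooldown window.
        if self.last_fired is not None and now - self.last_fired < self.cooldown:
            return None
        self.last_fired = now
        draft = Draft(self.channel, message, self.respond(message))
        queue.append(draft)  # nothing is sent yet; a human must decide
        return draft


def approve(draft: Draft, send: Callable[[str, str], None],
            edited: Optional[str] = None) -> None:
    """Apply an optional edit, then send. This is the only place a reply goes out."""
    if edited is not None:
        draft.reply = edited
    draft.status = "approved"
    send(draft.channel, draft.reply)


def reject(draft: Draft) -> None:
    """Discard the draft without sending anything."""
    draft.status = "rejected"
```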

BYOK — Bring Your Own Key

Solo supports 5 AI providers. Configure in Settings → AI Providers:

| Provider | Models | Cost |
|---|---|---|
| Anthropic Claude | Sonnet 4, Opus, Haiku | BYOK |
| OpenAI | GPT-4o, GPT-4o Mini, GPT-4 Turbo, o1-preview | BYOK |
| Google Gemini | 2.0 Flash, 2.0 Pro, 1.5 Flash | BYOK |
| AWS Bedrock | Claude, Titan | BYOK |
| Ollama (Local) | 39 GGUF models | Free |

API keys are stored in your browser's localStorage. They are never sent to any server other than the respective AI provider.

Local Models

Solo includes a Model Hub with 39 GGUF models across 9 families. One-click download — no API key, no internet after download, no data leaving your machine.

RAM Requirements

| Your RAM | Models You Can Run |
|---|---|
| 4 GB | Qwen 3.5 0.8B, Gemma 3 1B, Llama 3.2 1B |
| 8 GB | Qwen 3 8B, DeepSeek R1 7B, Mistral 7B, Qwen 2.5 Coder 7B |
| 16 GB | Qwen 3 14B, Gemma 4 12B, Phi-4 14B, DeepSeek R1 14B |
| 32 GB | Qwen 3 32B, Gemma 4 27B, DeepSeek R1 32B, Gemma 3 27B |
| 48+ GB | Llama 3.1 70B, DeepSeek R1 70B |
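The RAM table above amounts to a simple tier lookup. The sketch below shows one way to do it; it is not Solo's detection code. total_ram_gb uses POSIX sysconf, so it returns None on Windows, and the tier list is copied from the table.

```python
import os
from typing import List, Optional, Tuple

# (minimum RAM in GB, models), largest tier first; copied from the table above.
RAM_TIERS: List[Tuple[int, List[str]]] = [
    (48, ["Llama 3.1 70B", "DeepSeek R1 70B"]),
    (32, ["Qwen 3 32B", "Gemma 4 27B", "DeepSeek R1 32B", "Gemma 3 27B"]),
    (16, ["Qwen 3 14B", "Gemma 4 12B", "Phi-4 14B", "DeepSeek R1 14B"]),
    (8, ["Qwen 3 8B", "DeepSeek R1 7B", "Mistral 7B", "Qwen 2.5 Coder 7B"]),
    (4, ["Qwen 3.5 0.8B", "Gemma 3 1B", "Llama 3.2 1B"]),
]


def total_ram_gb() -> Optional[float]:
    """Physical RAM in GB via POSIX sysconf; None where unsupported (e.g. Windows)."""
    try:
        return os.sysconf("SC_PHYS_PAGES") * os.sysconf("SC_PAGE_SIZE") / 1024**3
    except (AttributeError, ValueError, OSError):
        return None


def recommended_models(ram_gb: float) -> List[str]:
    """Models from the largest tier that fits in ram_gb; empty below 4 GB."""
    for min_gb, models in RAM_TIERS:
        if ram_gb >= min_gb:
            return models
    return []
```

A machine sold as 16 GB often reports slightly less physical memory (some is reserved), so a real implementation would likely round the detected value or allow some slack before dropping to a lower tier.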

9 Model Families

- Gemma 4 (New, Recommended): Google's latest (April 2025). 12B and 27B variants. Best-in-class instruction following, reasoning, and multilingual support at its size. Our top pick for 16GB+ machines.

- Qwen 3.5 / 3 / 2.5 — Alibaba's versatile family. General chat, reasoning, and coding (Qwen 2.5 Coder is excellent for IDE integration). Wide range from 0.8B to 32B.

- DeepSeek R1 — Advanced reasoning with visible thinking mode. 7B to 70B. Great for analysis, math, and complex problem-solving.

- Gemma 3 — Google's previous generation. Still solid for lightweight tasks (1B to 27B).

- Llama 3 — Meta's open models. 1B to 70B. Strong general-purpose performance.

- Mistral — Fast and lightweight (7B). Good for quick responses on lower-spec machines.

- Phi-4 — Microsoft's 14B model. Punches above its weight on reasoning tasks.

Inference powered by llama-cpp-python — CPU-only, no GPU required. Models stored in ~/.contextuai-solo/models/.
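To see which GGUF files are in the models folder and how much disk they use, a short script is enough. It assumes the files sit in ~/.contextuai-solo/models/ (possibly in subfolders) with a .gguf extension; the Model Hub view inside Solo may track more than this.

```python
from pathlib import Path
from typing import Dict

MODELS_DIR = Path.home() / ".contextuai-solo" / "models"


def installed_models(models_dir: Path = MODELS_DIR) -> Dict[str, float]:
    """Map each .gguf file (path relative to models_dir) to its size in GB."""
    if not models_dir.is_dir():
        return {}  # nothing downloaded yet
    return {
        str(p.relative_to(models_dir)): p.stat().st_size / 1024**3
        for p in sorted(models_dir.rglob("*.gguf"))
        if p.is_file()
    }


if __name__ == "__main__":
    models = installed_models()
    for name, size_gb in models.items():
        print(f"{size_gb:7.2f} GB  {name}")
    print(f"{sum(models.values()):7.2f} GB total")
```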

Built-in Coding Server

Solo exposes an OpenAI-compatible API at localhost:18741/v1/chat/completions. Point your IDE at it for free, offline code completion.
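Because the endpoint follows the OpenAI chat-completions format, any HTTP client can call it. This sketch uses only the Python standard library. It assumes Solo is running, that no API key is required, and that the model field accepts the identifier of a local model you have downloaded; check the model list in Solo for the real identifier.

```python
import json
import urllib.request

BASE_URL = "http://localhost:18741/v1"


def build_chat_request(prompt: str, model: str) -> urllib.request.Request:
    """Build a POST to the OpenAI-style /chat/completions endpoint."""
    body = {
        "model": model,  # assumption: the id of a model downloaded in Solo
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, model: str, timeout: float = 120.0) -> str:
    """Send the request and return the first choice's text (needs Solo running)."""
    with urllib.request.urlopen(build_chat_request(prompt, model), timeout=timeout) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

For example, chat("Write a function that reverses a string.", model="qwen2.5-coder-7b") would return the reply as a string; the model name there is a hypothetical placeholder. CPU inference can be slow on large models, hence the generous default timeout.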

IDE Setup

| IDE | How to Connect |
|---|---|
| VS Code | Continue or Copilot extension → set base URL to http://localhost:18741/v1 |
| Cursor | Settings → Models → Add custom model endpoint |
| Windsurf | OpenAI-compatible provider → set base URL |
| Aider | aider --openai-api-base http://localhost:18741/v1 |
| Other tools | Anything that speaks the OpenAI API format: just change the base URL |

Settings

Open Settings from the sidebar. It has 5 tabs:

Settings page with AI Providers, Brand Voice, Appearance, Data & Export, and About tabs

- AI Providers — Configure API keys, download local models, test connections, set active provider

- Brand Voice — Business name, industry, brand prompt, target audience, content topics

- Appearance — Theme (Light, Dark, System) and font size (Small, Medium, Large)

- Data & Export — Export/import database as JSON, clear all data

- About — App version, check for updates, technology stack links

Brand Voice

Configure your brand identity so AI-generated content matches your tone. Settings → Brand Voice:

- Business Name — Used in agent prompts and content generation

- Industry — 12 options (Tech, Marketing, Finance, Healthcare, etc.) to inform domain knowledge

- Brand Voice Prompt — Free-form instructions: tone, style, personality, things to avoid

- Target Audience — Who your content is aimed at

- Content Topics — Key subjects the AI should focus on

A dynamic preview shows how your brand voice configuration looks before saving.

Data & Export

All your data lives locally. From Settings → Data & Export:

- Export Data — Download a full JSON backup of all chats, personas, agents, crews, and settings

- Import Data — Restore from a previous backup file

- Clear All Data — Permanently delete everything (use with caution)

Keyboard Shortcuts

| Shortcut | Action |
|---|---|
| Ctrl+N / Cmd+N | New chat session |
| Enter | Send message |
| Shift+Enter | New line in message |
| Escape | Stop AI generation |

Data Storage

Everything is stored locally on your machine:

| What | Where |
|---|---|
| Database (chats, personas, agents, crews) | ~/.contextuai-solo/data/contextuai.db |
| Local AI models (GGUF files) | ~/.contextuai-solo/models/ |
| API keys & provider config | Browser localStorage |
| Data exports | Downloads folder |

No telemetry, no cloud calls (except to your chosen AI provider), no data collection.

Tech Stack

| Layer | Technology |
|---|---|
| Desktop Shell | Tauri v2 (Rust): lightweight, secure, cross-platform |
| Frontend | React 19 + Vite + TypeScript 5.9 |
| Styling | Tailwind CSS + Framer Motion |
| Backend | FastAPI (Python 3.11+) |
| Database | SQLite via async adapter |
| Local Inference | llama-cpp-python (CPU-only) |
| AI Providers | Anthropic, OpenAI, Google, AWS Bedrock, Ollama |
| Agent Framework | Strands Agents SDK |
| Icons | Lucide React |

Troubleshooting

Backend won't start

- Verify Python 3.11+:

python --version

- Install dependencies:

cd backend && pip install -r requirements.txt

- Check that port 18741 is free. On Windows:

netstat -ano | findstr 18741

On Mac/Linux:

lsof -i :18741
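If netstat or lsof is not available, the same check can be done portably from Python. This sketch only tells you whether something accepts connections on 127.0.0.1:18741; unlike netstat -ano or lsof, it does not identify the process holding the port.

```python
import socket


def port_in_use(port: int, host: str = "127.0.0.1") -> bool:
    """True if something is already accepting TCP connections on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        s.settimeout(1.0)
        return s.connect_ex((host, port)) == 0  # 0 means the connect succeeded


if __name__ == "__main__":
    print("port 18741 is busy" if port_in_use(18741) else "port 18741 is free")
```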

Frontend won't start

- Verify Node.js 18+:

node --version

- Clean install:

rm -rf node_modules && npm install

- Windows Rollup error:

npm install @rollup/rollup-win32-x64-msvc

Chat returns errors

- Verify API key in Settings → AI Providers → Test Connection

- If using Ollama, make sure it's running:

ollama serve

- Check the backend terminal for error details
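To confirm Ollama is reachable before digging into Solo's logs, you can query Ollama's /api/tags endpoint, which lists the models installed locally. The sketch assumes Ollama's default address, http://localhost:11434; pass a different base_url if you changed it.

```python
import json
import urllib.request
from typing import List, Optional


def ollama_models(base_url: str = "http://localhost:11434",
                  timeout: float = 2.0) -> Optional[List[str]]:
    """Return installed Ollama model names, or None if the server can't be reached."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
            data = json.load(resp)
    except (OSError, ValueError):  # connection refused, timeout, or non-JSON reply
        return None
    return [m["name"] for m in data.get("models", [])]


if __name__ == "__main__":
    names = ollama_models()
    print("Ollama is not running" if names is None else f"Ollama models: {names}")
```

An empty list means Ollama is running but has no models pulled yet.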

Local model download fails

- Check internet connection (download only — inference is fully offline)

- Ensure there is enough disk space in ~/.contextuai-solo/models/

- Try a smaller model first (Gemma 3 1B is ~700 MB)
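Before retrying a large download, you can check free space on the drive that holds the models folder. This sketch uses shutil.disk_usage; the 1 GB headroom is an arbitrary safety margin, not a Solo requirement, and the download size must come from the Model Hub listing.

```python
import shutil
from pathlib import Path

MODELS_DIR = Path.home() / ".contextuai-solo" / "models"


def has_room_for(download_gb: float, models_dir: Path = MODELS_DIR,
                 headroom_gb: float = 1.0) -> bool:
    """True if the drive holding models_dir has download_gb plus headroom free."""
    target = models_dir
    while not target.exists():  # the folder may not exist before the first download
        target = target.parent
    free_gb = shutil.disk_usage(target).free / 1024**3
    return free_gb >= download_gb + headroom_gb


if __name__ == "__main__":
    print(has_room_for(0.7))  # e.g. Gemma 3 1B, ~700 MB
```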

Telegram/Discord webhook not receiving messages

- Ensure ngrok is running:

ngrok http 18741

- Set the webhook URL in the platform's settings to your ngrok HTTPS URL

- Check Discord privileged intents are enabled (Message Content, Server Members)

Database reset

- Export your data first from Settings → Data & Export

- Delete ~/.contextuai-solo/data/contextuai.db

- Restart the backend; it recreates the database with defaults
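If you want a file-level safety copy in addition to the JSON export, this sketch copies the database to a timestamped .bak file before deleting it. Run it only while the backend is stopped. It also handles SQLite -wal and -shm sidecar files if they exist; whether Solo uses WAL mode is an assumption, and the check is harmless if it does not.

```python
import shutil
import time
from pathlib import Path
from typing import Optional

DB_PATH = Path.home() / ".contextuai-solo" / "data" / "contextuai.db"


def backup_and_remove(db_path: Path = DB_PATH) -> Optional[Path]:
    """Back up and delete the database (plus -wal/-shm files); return the main backup."""
    if not db_path.exists():
        return None  # nothing to reset
    stamp = time.strftime("%Y%m%d-%H%M%S")
    for suffix in ("", "-wal", "-shm"):
        src = db_path.with_name(db_path.name + suffix)
        if src.exists():
            shutil.copy2(src, src.with_name(f"{src.name}.{stamp}.bak"))
            src.unlink()
    return db_path.with_name(f"{db_path.name}.{stamp}.bak")
```

To restore, stop the backend and copy the .bak file back to contextuai.db.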