See ContextuAI Solo in Action

A quick walkthrough of the desktop experience

Everything you need to run AI locally. Bring your own API keys, connect your databases, build agent crews — all from your desktop. No cloud required.

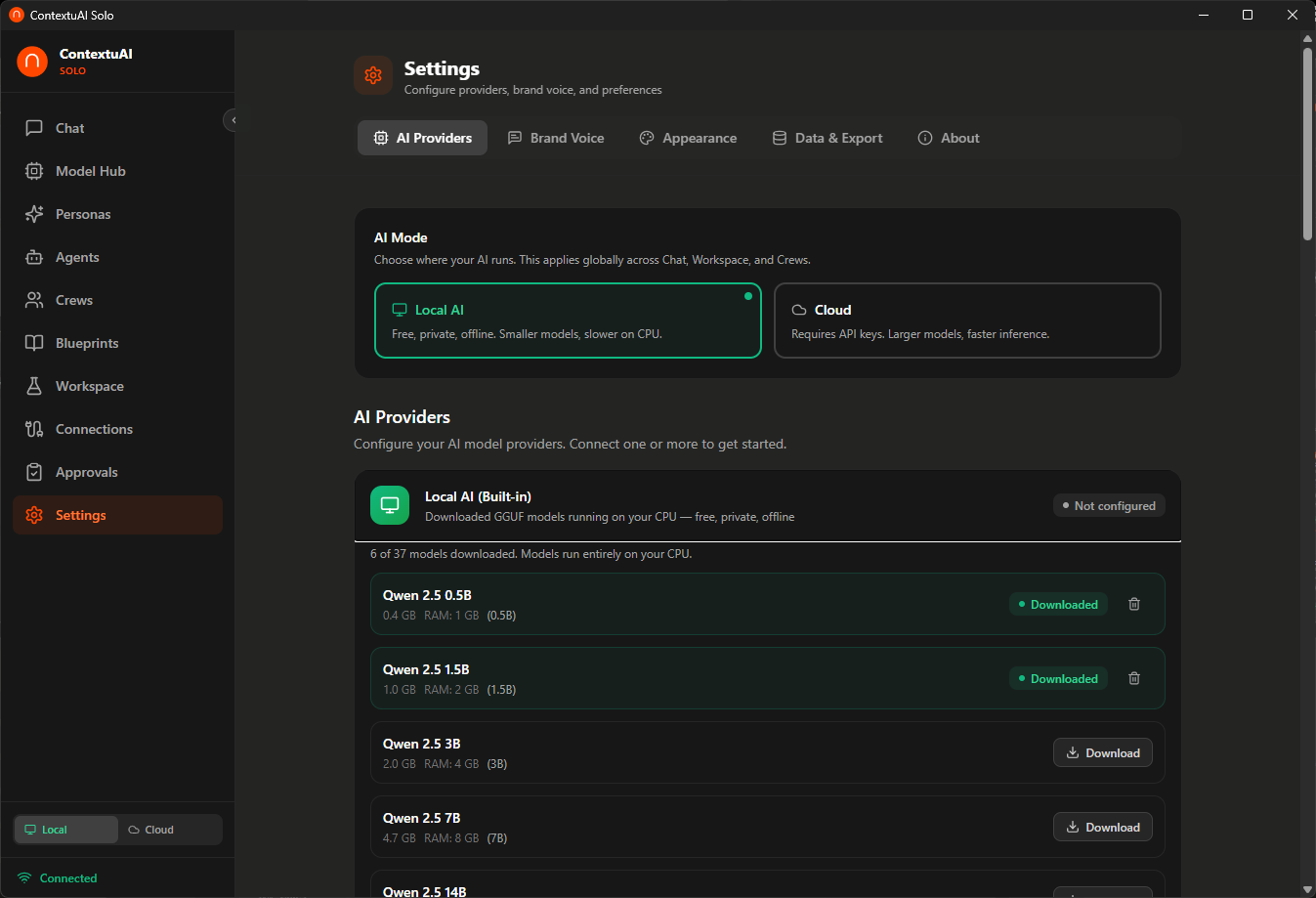

Connect Anthropic, OpenAI, Google, AWS Bedrock — or run free with 39 built-in local models including Gemma 4. Your keys, your models, your choice.

Built with Tauri + React + FastAPI. Lightweight native app that runs entirely on your machine. No server needed.

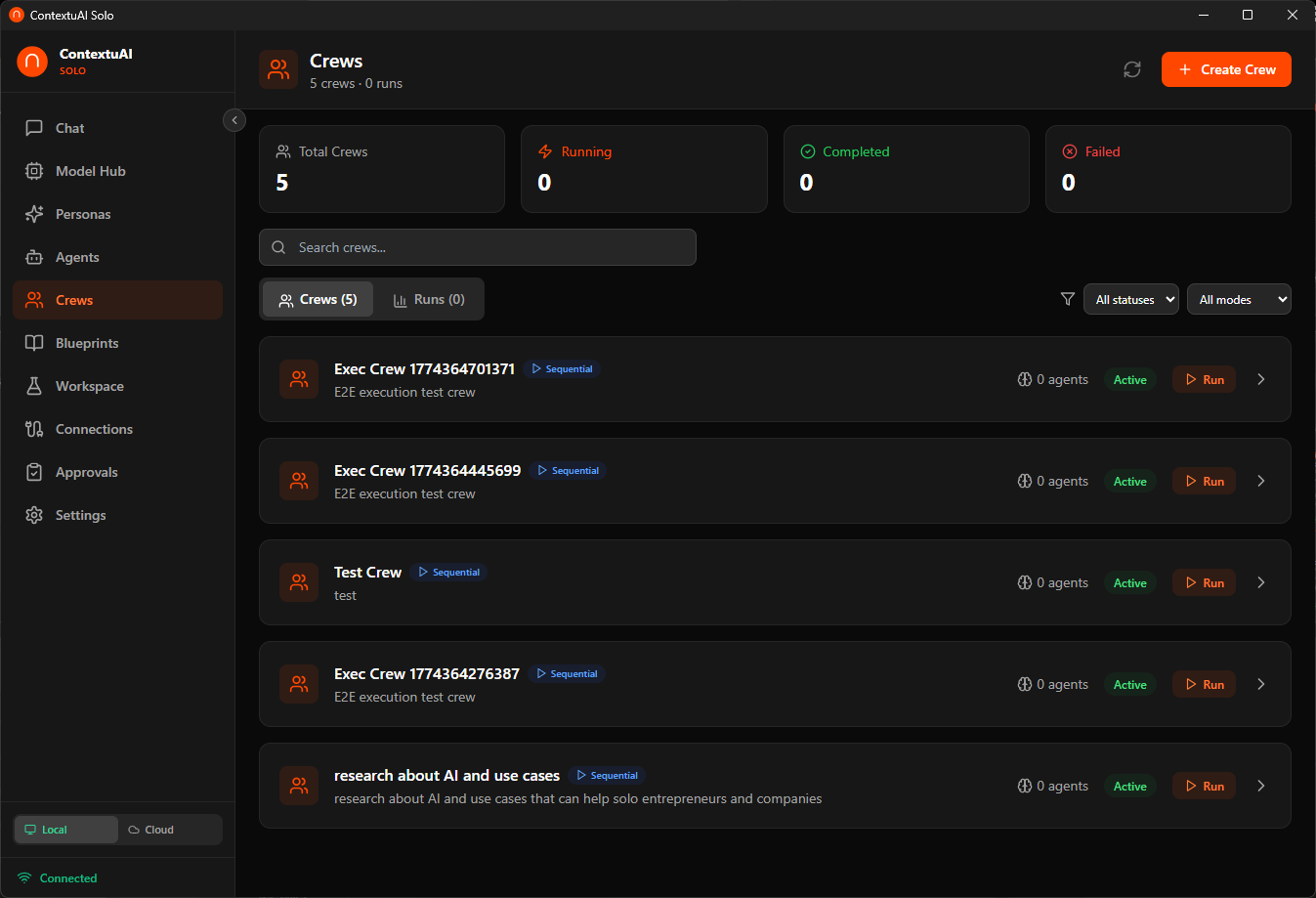

Create multi-agent teams with shared memory. Pre-built templates for marketing, research, and content workflows.

Multi-model conversations with session history

Database, GitHub, GitLab, Slack, MCP, API & more

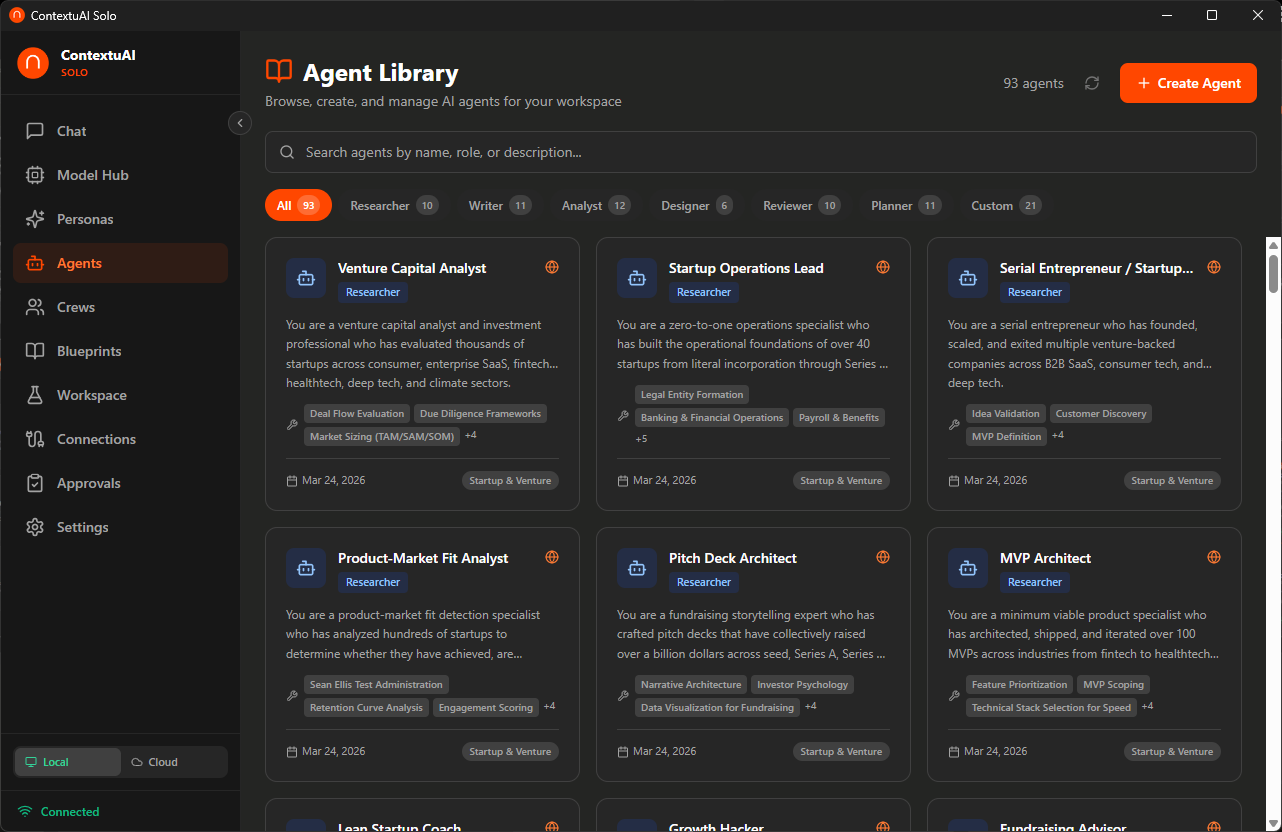

93 pre-built agents across 12 departments

Multi-agent projects with orchestration

Telegram, Discord, LinkedIn + 3 more coming soon

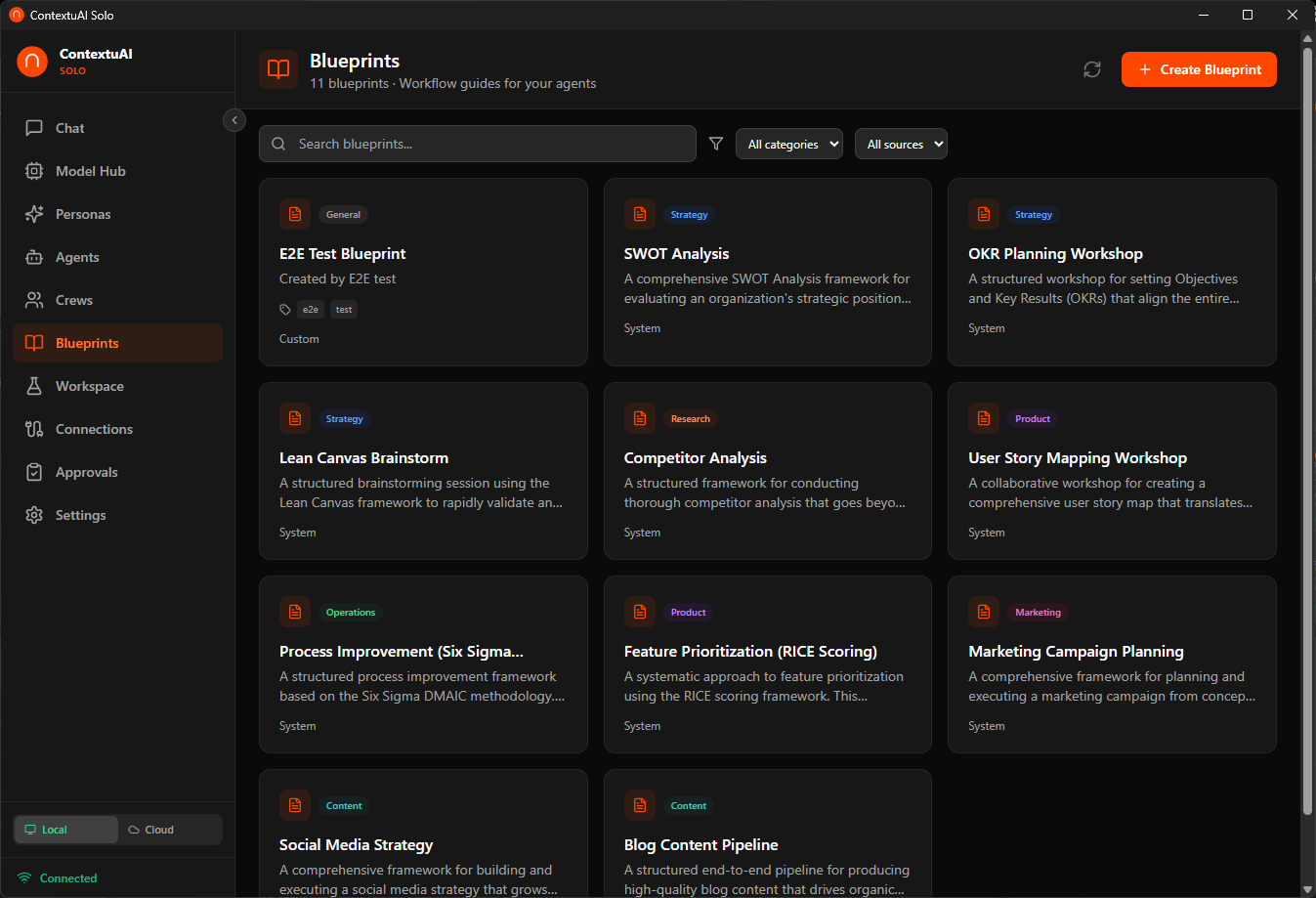

10 workshop + 5 team blueprints for common tasks

Human-in-the-loop review before AI sends messages

All data stays on your machine

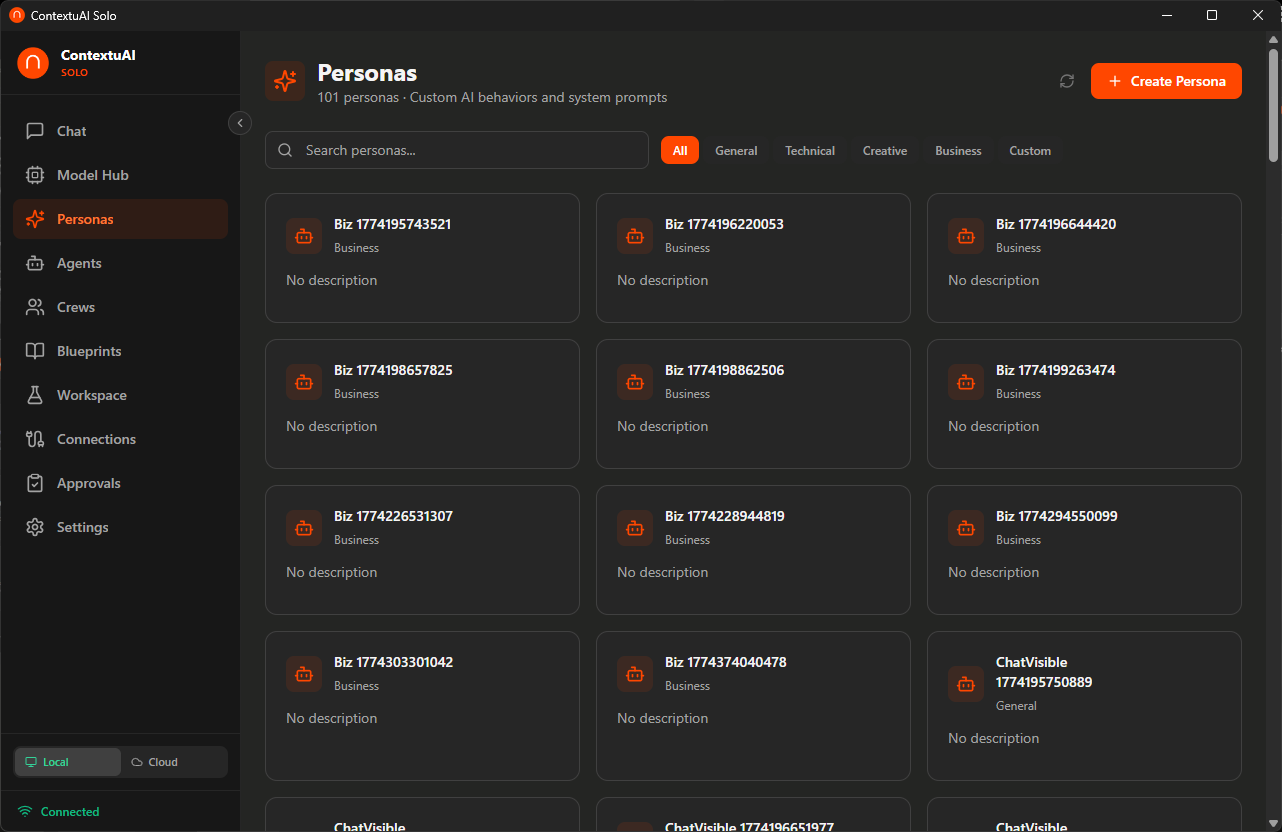

Not a generic chatbot — 93 specialized business agents across 12 departments. Each one is a domain expert with a tailored system prompt, recommended model, and relevant tools.

Choose a department: C-Suite, Marketing, Finance, Legal, HR, Design, Data, IT, Product, Startup, Operations, or Specialized

Each agent has a specialized system prompt — like hiring an expert consultant instantly

Get domain-expert responses — not generic AI, but a specialist who knows the field

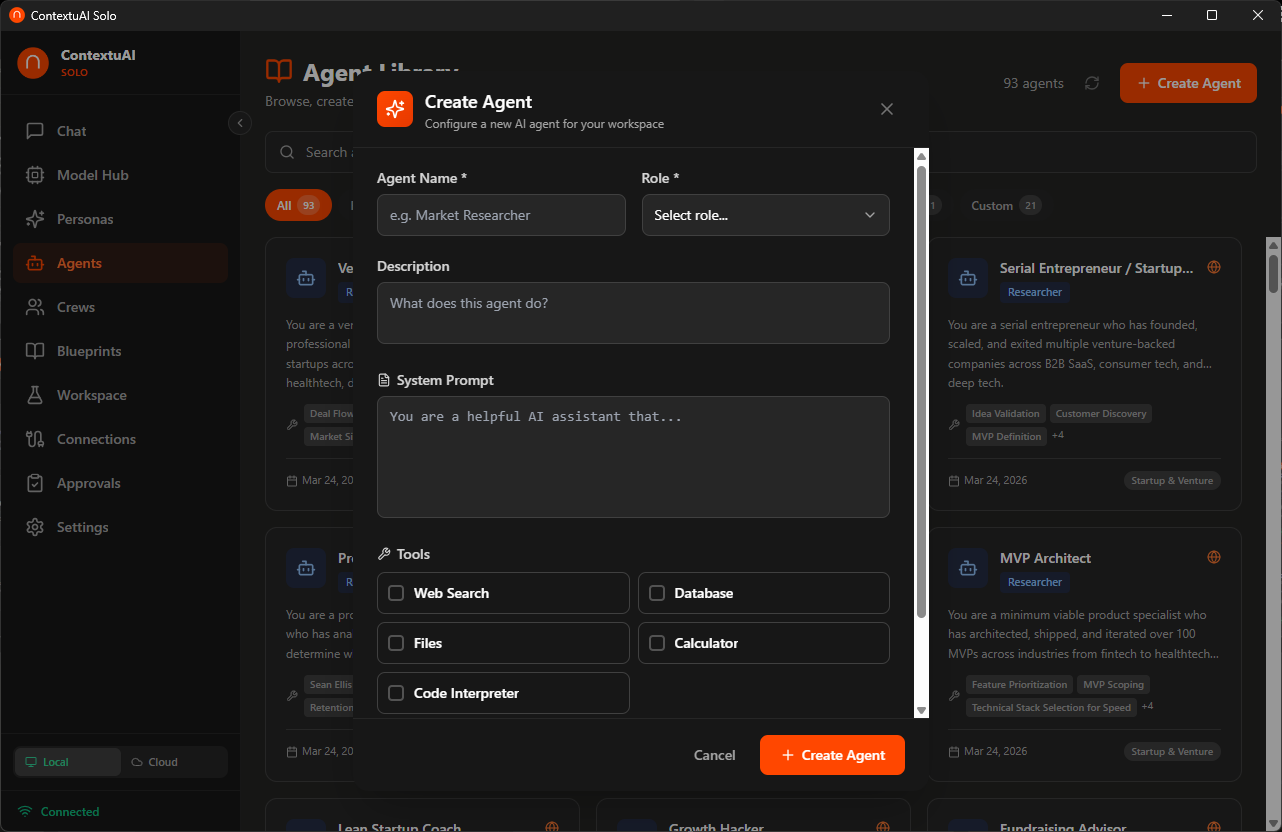

Create custom agents with tools, system prompts, and model selection

A 5-step wizard guides you through building multi-agent teams. Pick a blueprint, choose your AI model, assign agents, bind social channels, and launch — like hiring a department in one click.

Name your crew, pick a pre-built blueprint template, and choose your AI model

Choose an execution mode (Sequential, Parallel, Pipeline, or Autonomous), then add specialists from the 93-agent library

Bind social channels (Telegram, Discord, LinkedIn, Twitter/X) directly to your crew, review, and create

Sequential: One agent at a time. Output feeds into the next. Best for step-by-step workflows.

Parallel: All agents run simultaneously. Best when tasks are independent.

Pipeline: Staged processing with checkpoints between phases.

Autonomous: Agents self-organize and delegate based on the task.
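To make the difference between one agent feeding the next and all agents running at once concrete, here is a minimal sketch. It is not Solo's implementation: the `Agent` type, the two runner functions, and the toy agents are hypothetical stand-ins, and the Pipeline and Autonomous modes are not covered.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Hypothetical stand-in for an agent: takes text in, returns text out.
Agent = Callable[[str], str]


def run_sequential(agents: list[Agent], task: str) -> str:
    """Run agents one at a time; each output becomes the next agent's input."""
    result = task
    for agent in agents:
        result = agent(result)
    return result


def run_parallel(agents: list[Agent], task: str) -> list[str]:
    """Give every agent the same task at once; outputs keep the agents' order."""
    with ThreadPoolExecutor() as pool:
        return list(pool.map(lambda agent: agent(task), agents))


# Toy agents for illustration only.
def researcher(text: str) -> str:
    return text + " > research"


def writer(text: str) -> str:
    return text + " > draft"
```

With these toy agents, `run_sequential([researcher, writer], "brief")` chains the two steps into one result, while `run_parallel([researcher, writer], "brief")` returns one independent result per agent.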

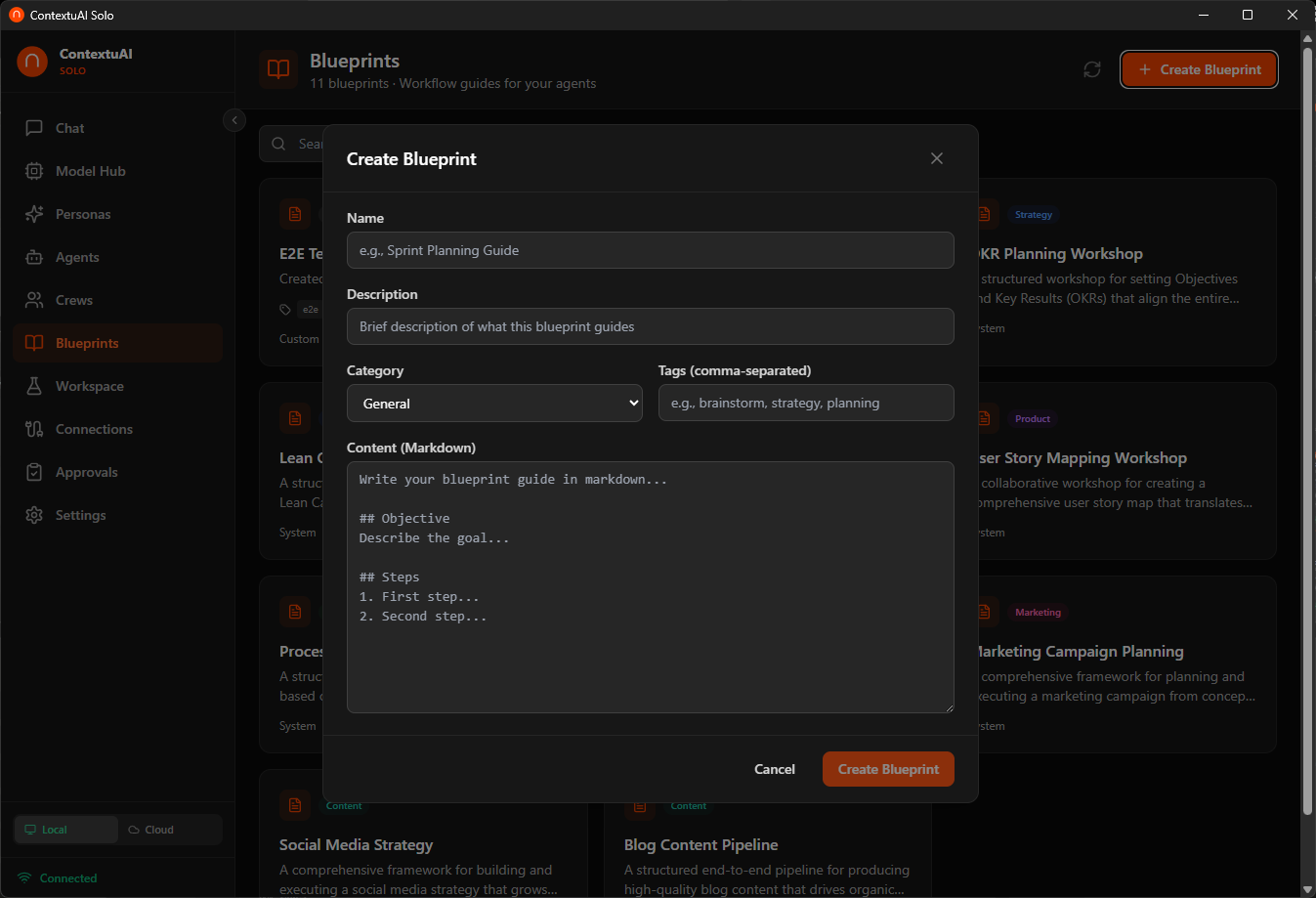

15 pre-built templates — 10 workshop blueprints and 5 team templates for the most common business tasks. Pick one when creating a crew or workspace project — agents, execution mode, and goals are pre-configured.

Workshop blueprints: multi-agent brainstorming sessions for strategic work

Team templates: pre-configured agent teams for engineering workflows

Create your own blueprint with agents, steps, and markdown content

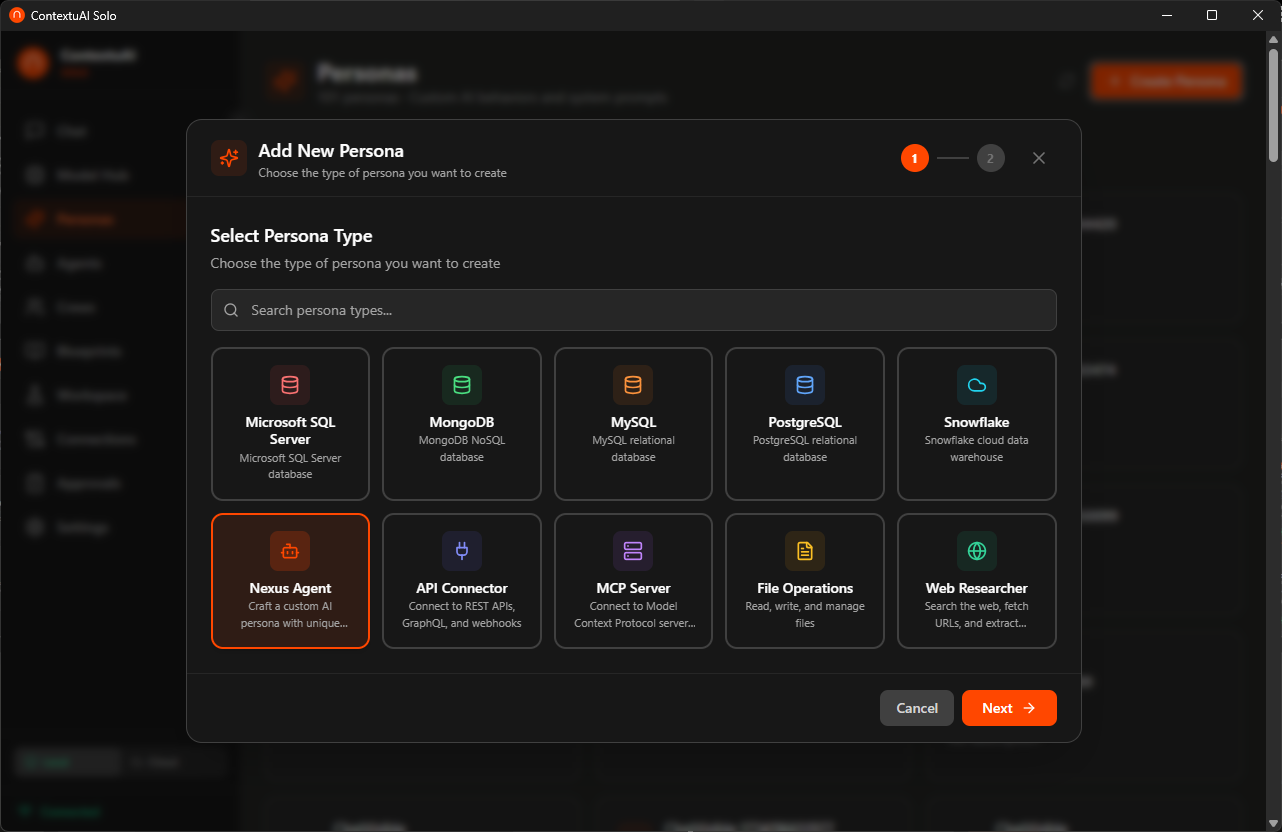

Create AI personas that connect to your real systems. Query your PostgreSQL database in plain English. Search the web. Call APIs. Connect GitHub, GitLab, Slack, and MCP servers. All through natural conversation.

Browse a searchable card grid — database connectors, MCP server, API, web researcher, file ops & more

Name your persona, add credentials, customize the system prompt — all stored locally in SQLite

"Show me top 10 customers by revenue" — AI writes the SQL, runs it, and explains the results
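For a sense of what a question like this might turn into, here is a hedged sketch. The schema, the table names, and the sample rows are invented for illustration, and an in-memory SQLite database stands in for your real PostgreSQL instance; the SQL that Solo actually generates will depend on your own tables.

```python
import sqlite3

# Hypothetical schema and toy rows, for illustration only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (customer_id INTEGER, amount REAL);
    INSERT INTO customers VALUES (1, 'Acme'), (2, 'Globex'), (3, 'Initech');
    INSERT INTO orders VALUES (1, 120.0), (1, 80.0), (2, 300.0), (3, 50.0);
""")

# One plausible translation of "Show me top 10 customers by revenue".
TOP_CUSTOMERS_SQL = """
    SELECT c.name, SUM(o.amount) AS revenue
    FROM customers AS c
    JOIN orders AS o ON o.customer_id = c.id
    GROUP BY c.id, c.name
    ORDER BY revenue DESC
    LIMIT 10
"""

rows = conn.execute(TOP_CUSTOMERS_SQL).fetchall()
for name, revenue in rows:
    print(f"{name}: {revenue:.2f}")
```

The query sums order amounts per customer, sorts from highest to lowest revenue, and keeps at most ten rows.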

Wizard-based persona creation — pick a type, configure credentials, start chatting

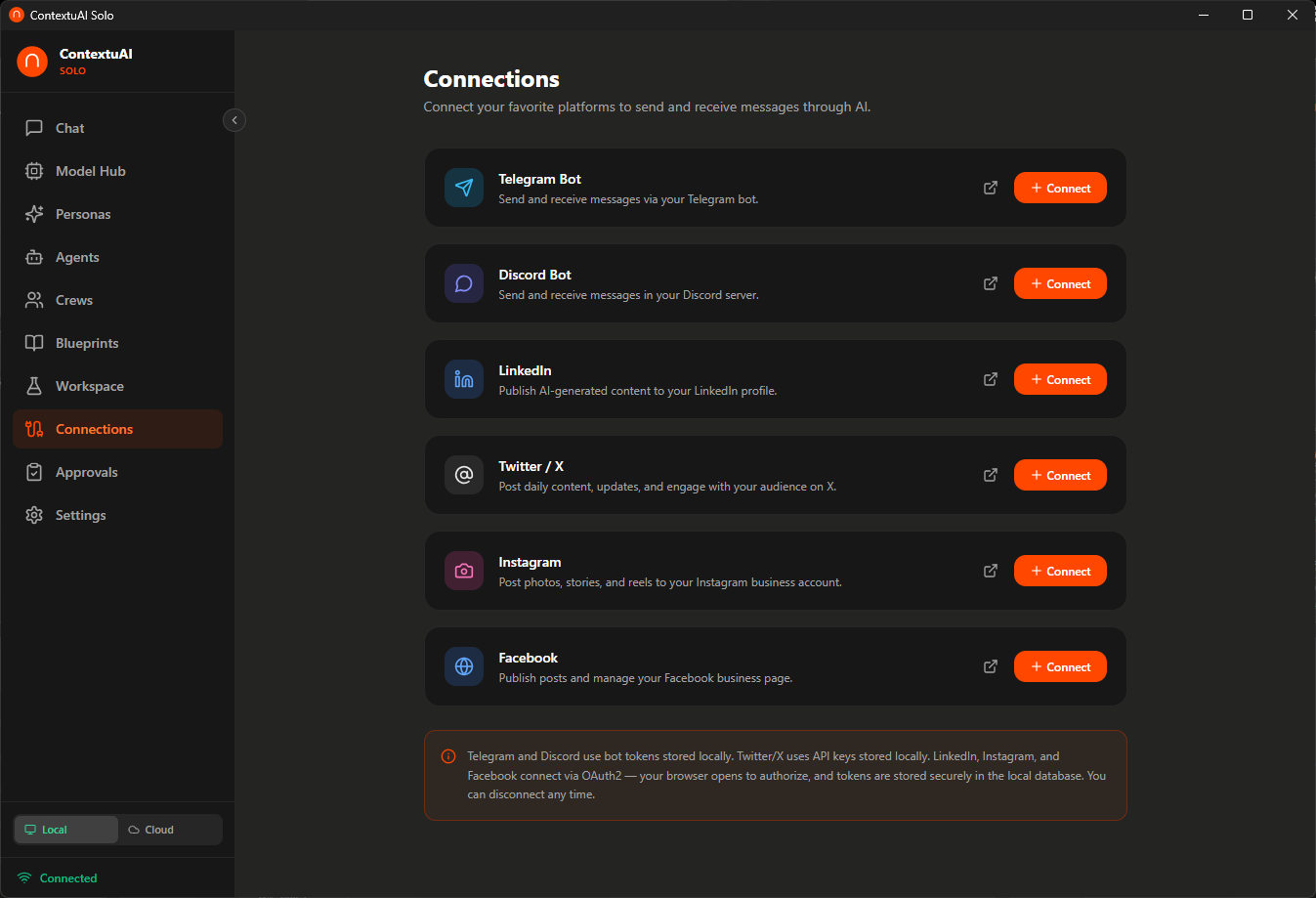

Connect your social media and messaging platforms. Telegram, Discord, and LinkedIn are live today — with Twitter/X, Instagram, and Facebook coming soon. Let your AI crews generate content and publish directly.

OAuth for LinkedIn, Instagram, Facebook — token paste for Telegram, Discord, Twitter/X

Use agents or crews to create posts, articles, images, and campaigns

Distribute to all connected platforms simultaneously — or schedule for later

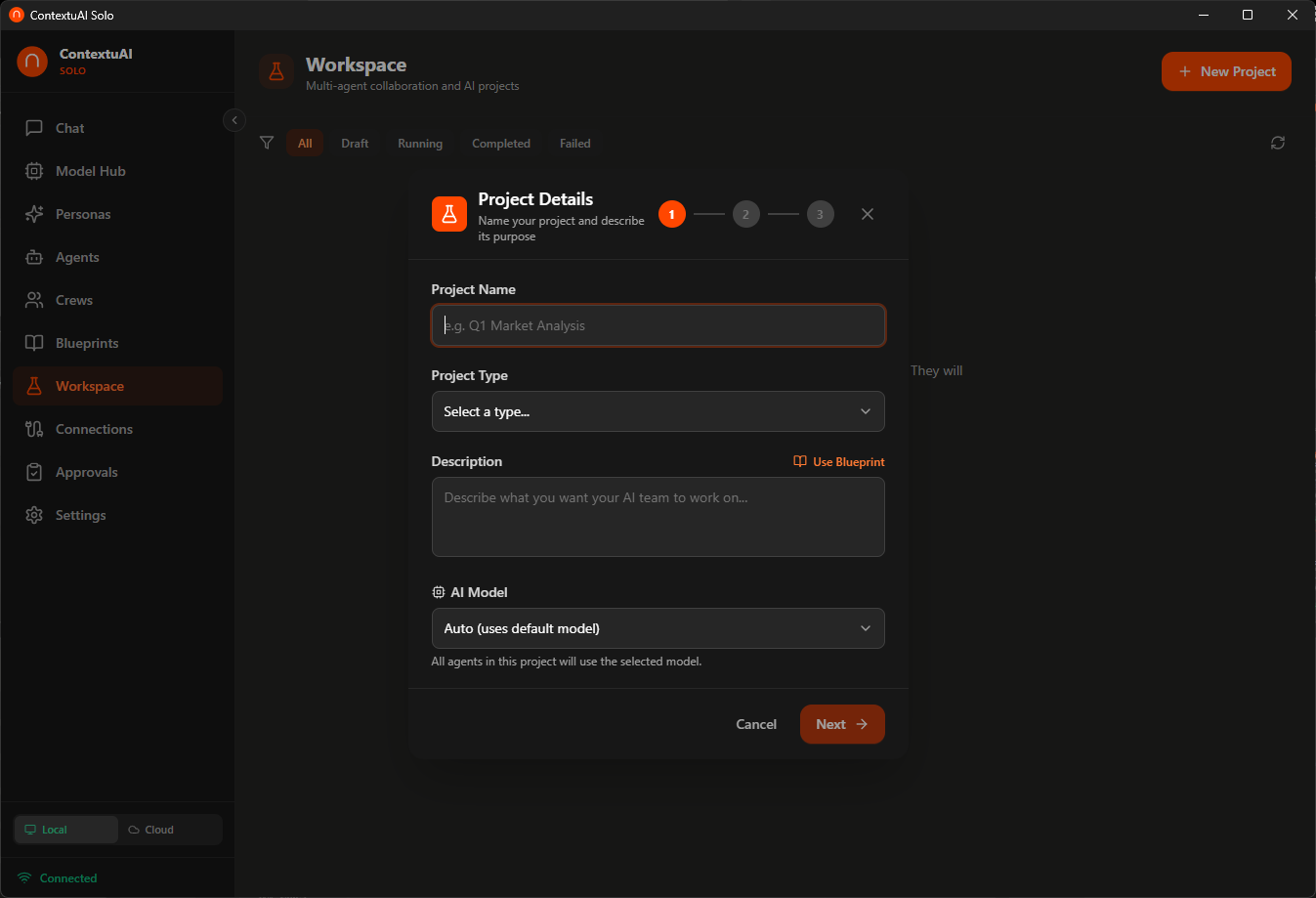

Create multi-agent projects with structured brainstorming. Pick a blueprint, assign agents, choose a model, and let your AI team collaborate — with artifacts you can export.

Name it, pick a type, select a blueprint template, and choose which AI model to use

Search the 93-agent library and add specialists to your project team

Agents collaborate on your task — review outputs and export artifacts

Step-by-step project wizard with blueprint integration and model selection

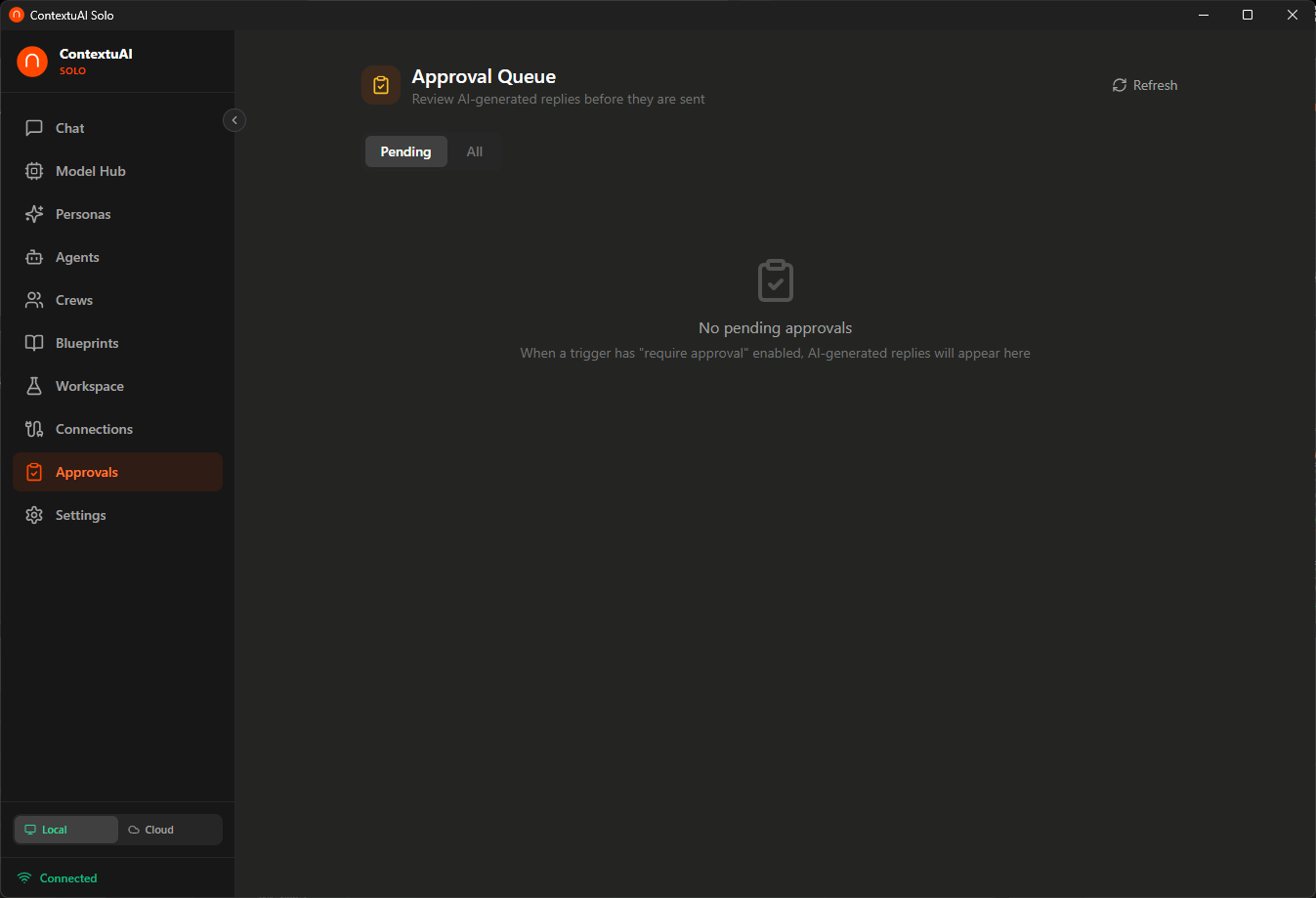

When crews or agents auto-reply to incoming messages, every response lands in your approval queue first. Review, edit, approve, or reject — nothing gets sent without your say-so.

Link a channel (Telegram, Discord, etc.) to a crew or agent with "require approval" enabled

Incoming messages are processed by your AI — the draft appears in the approval queue

Edit the response if needed, then approve to send — or reject to discard
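The review flow above can be pictured as a small queue of drafts. This is a minimal sketch, not Solo's code: `Draft`, `ApprovalQueue`, and the `sent` list (a stand-in for actually posting to a channel) are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Draft:
    channel: str
    incoming: str
    reply: str
    status: str = "pending"  # pending -> approved or rejected


@dataclass
class ApprovalQueue:
    drafts: list[Draft] = field(default_factory=list)
    # Stand-in for a real channel send: records (channel, reply) pairs.
    sent: list[tuple[str, str]] = field(default_factory=list)

    def submit(self, channel: str, incoming: str, reply: str) -> Draft:
        """Queue an AI-generated reply; nothing is sent at this point."""
        draft = Draft(channel, incoming, reply)
        self.drafts.append(draft)
        return draft

    def approve(self, draft: Draft, edited_reply: Optional[str] = None) -> None:
        """Optionally edit the reply, then mark it approved and send it."""
        if draft.status != "pending":
            raise ValueError("draft has already been reviewed")
        if edited_reply is not None:
            draft.reply = edited_reply
        draft.status = "approved"
        self.sent.append((draft.channel, draft.reply))

    def reject(self, draft: Draft) -> None:
        """Discard the draft without sending anything."""
        if draft.status != "pending":
            raise ValueError("draft has already been reviewed")
        draft.status = "rejected"

    def pending(self) -> list[Draft]:
        """Return the drafts still waiting for a human decision."""
        return [d for d in self.drafts if d.status == "pending"]
```

The key design point is that `submit` never sends: a reply only reaches `sent` through `approve`, which mirrors the "nothing gets sent without your say-so" rule.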

Review AI-generated replies before they are sent — full control over every message

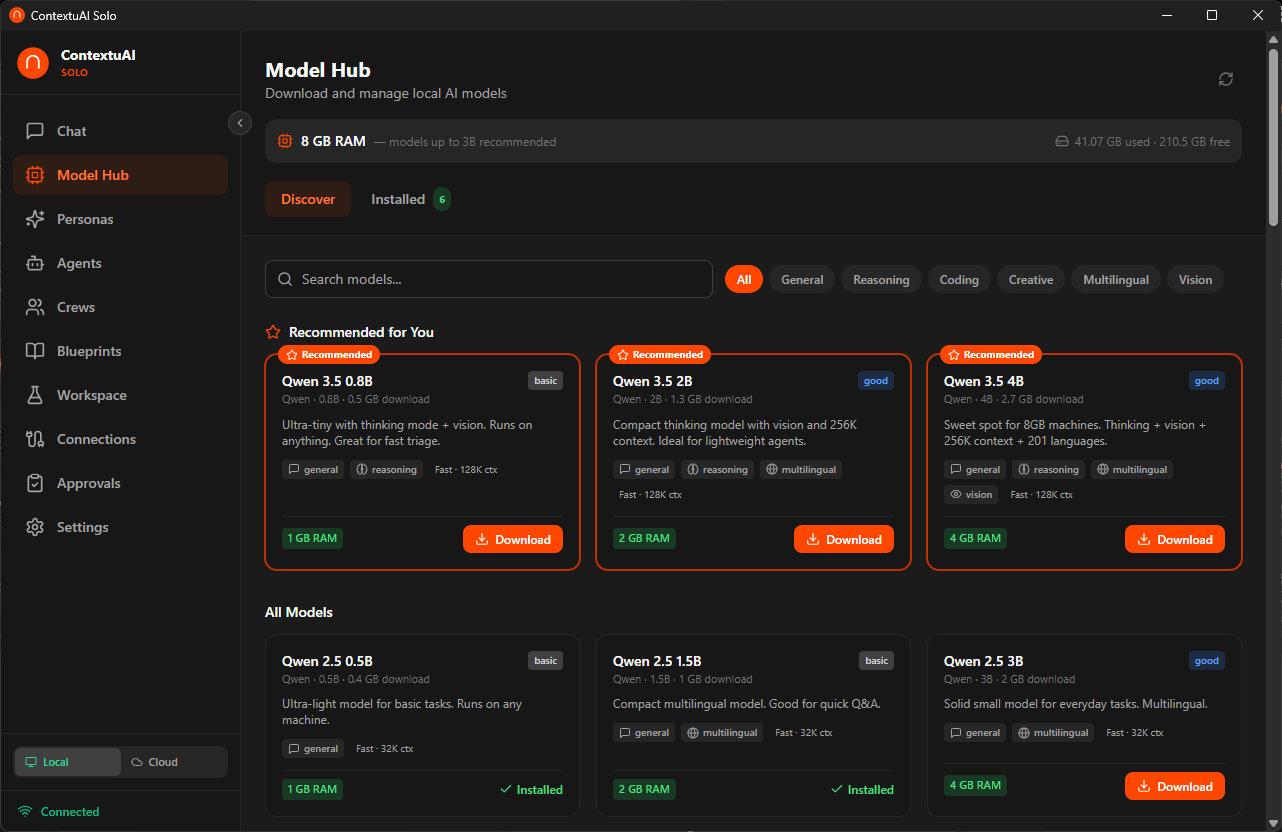

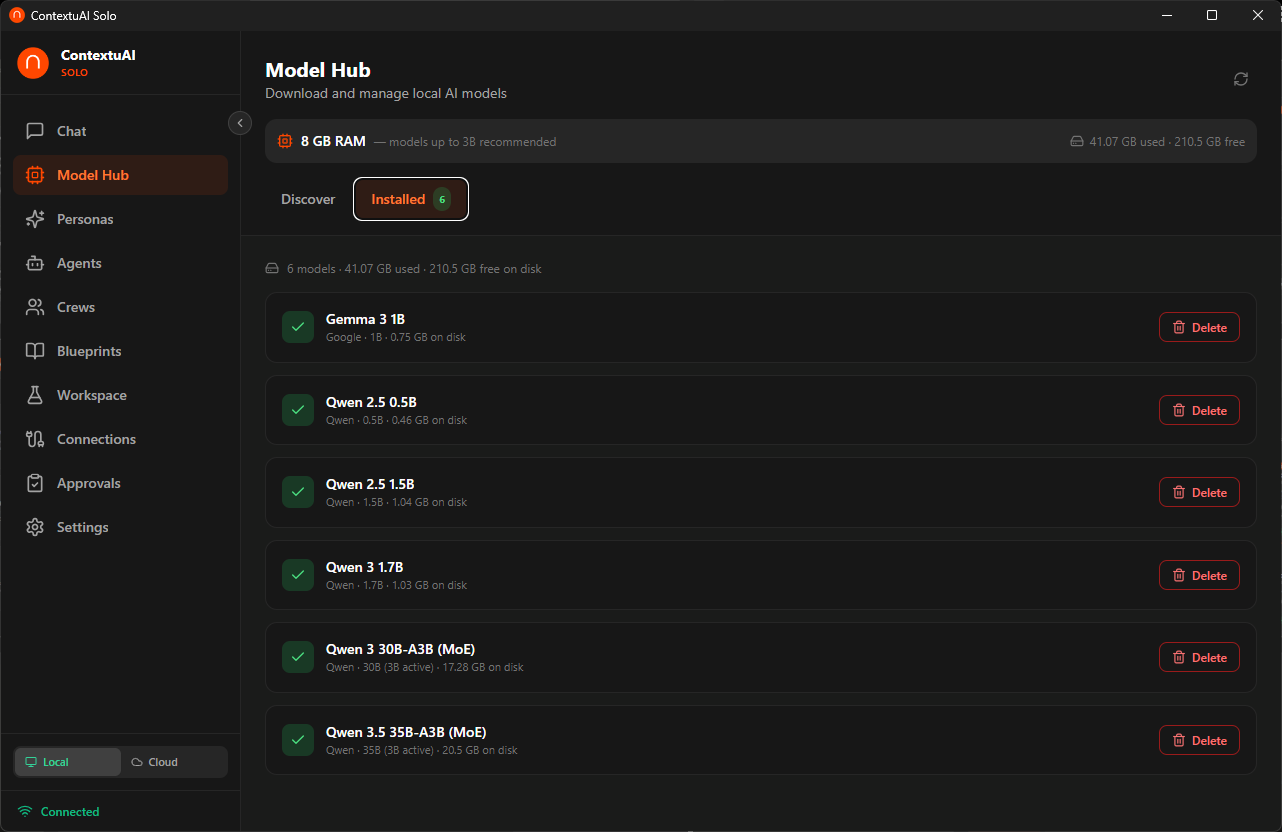

From tiny 0.5B models that run on any laptop to powerful 70B models for machines with more RAM — Solo auto-detects your hardware and recommends the right model. Download it. Click run. That's it. No API keys. No internet. No data leaves your machine. Ever.

Solo detects your RAM and highlights which models your machine can run

Pick a model, click download — real-time progress bar, stored locally in ~/.contextuai-solo/models/

Select your local model from the chat dropdown — same interface, zero cost, total privacy

| Your RAM | Models You Can Run |
|---|---|
| 4 GB | Qwen 3.5 0.8B, Gemma 3 1B, Llama 3.2 1B |
| 8 GB | Qwen 3 8B, DeepSeek R1 7B, Mistral 7B, Qwen 2.5 Coder 7B |
| 16 GB | Qwen 3 14B, Gemma 4 12B, Phi-4 14B, DeepSeek R1 14B, Qwen 2.5 Coder 14B |
| 32 GB | Qwen 3 32B, Gemma 4 27B, DeepSeek R1 32B, Gemma 3 27B, Qwen 2.5 Coder 32B |
| 48+ GB | Llama 3.1 70B, DeepSeek R1 70B |
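The table can be read as a simple lookup. The sketch below is illustrative only: `MODEL_TIERS` copies the rows above, `models_for_ram` is a hypothetical helper, and it assumes that a machine can also run every model from the tiers below its own. Solo's actual detection logic may differ.

```python
# RAM tiers (in GB) and model names copied from the table above.
MODEL_TIERS = [
    (4, ["Qwen 3.5 0.8B", "Gemma 3 1B", "Llama 3.2 1B"]),
    (8, ["Qwen 3 8B", "DeepSeek R1 7B", "Mistral 7B", "Qwen 2.5 Coder 7B"]),
    (16, ["Qwen 3 14B", "Gemma 4 12B", "Phi-4 14B", "DeepSeek R1 14B",
          "Qwen 2.5 Coder 14B"]),
    (32, ["Qwen 3 32B", "Gemma 4 27B", "DeepSeek R1 32B", "Gemma 3 27B",
          "Qwen 2.5 Coder 32B"]),
    (48, ["Llama 3.1 70B", "DeepSeek R1 70B"]),
]


def models_for_ram(ram_gb: float) -> list[str]:
    """Return every listed model whose tier fits within ram_gb.

    Assumption: lower-tier models also run on higher-RAM machines.
    """
    runnable: list[str] = []
    for min_ram_gb, models in MODEL_TIERS:
        if ram_gb >= min_ram_gb:
            runnable.extend(models)
    return runnable
```

For example, `models_for_ram(8)` returns the 4 GB and 8 GB rows combined, and anything below 4 GB returns an empty list.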

9 model families covering general chat, reasoning, coding, creative writing, multilingual, and vision

Inference powered by llama-cpp-python — no GPU required, runs on any modern machine

Discover models — auto-detects your RAM and recommends what you can run

Manage installed models — sync status, storage usage, ready to chat

Solo exposes an OpenAI-compatible API endpoint on your localhost. Point your IDE at it and use Qwen 2.5 Coder or DeepSeek R1 as your local coding assistant — completely offline, completely free.

Grab Qwen 2.5 Coder (7B/14B/32B) or DeepSeek R1 from the Model Hub

An OpenAI-compatible endpoint goes live at localhost:18741/v1/chat/completions

VS Code, Cursor, Windsurf, or any tool that speaks OpenAI — just change the base URL

100% offline. Your code never leaves your machine. No telemetry, no cloud calls, no API charges.
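If you would rather call the endpoint from a script than from an IDE, a minimal sketch using only the Python standard library might look like this. The URL comes from the text above; the model identifier `qwen2.5-coder-7b` is a placeholder, and the response shape is assumed to follow the usual OpenAI chat-completions format.

```python
import json
import urllib.request

# Endpoint quoted in the text above.
BASE_URL = "http://localhost:18741/v1"


def build_chat_request(prompt: str, model: str = "qwen2.5-coder-7b") -> urllib.request.Request:
    """Build a POST request in the OpenAI chat-completions format."""
    payload = {
        # Placeholder identifier: use the name Solo shows for your local model.
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt to the local endpoint and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=120) as resp:
        body = json.load(resp)
    # Assumes the standard OpenAI response layout.
    return body["choices"][0]["message"]["content"]
```

With Solo running and a local model loaded, `ask("Write a Python function that reverses a string.")` should return the model's answer as plain text. Any OpenAI client library should work the same way once its base URL points at `http://localhost:18741/v1`.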

Download ContextuAI Solo for free and start building with AI today.